It might seem ironic to focus so much on broker-based reliability, when we often explain ØMQ as "brokerless messaging." However, in messaging, as in real life, the middleman is both a burden and a benefit. In practice, most messaging architectures benefit from a mix of distributed and brokered messaging. You get the best results when you can decide freely what trade-offs you want to make. This is why I can drive 20 minutes to a wholesaler to buy five cases of wine for a party, but I can also walk 10 minutes to a corner store to buy one bottle for a dinner. Our highly context-sensitive relative valuations of time, energy, and cost are essential to the real-world economy. And they are essential to an optimal message-based architecture.

This is why ØMQ does not impose a broker-centric architecture, though it does give you the tools to build brokers, aka proxies (and we've built a dozen or so different ones so far, just for practice).

So, we'll end this chapter by deconstructing the broker-based reliability we've built so far, and turning it back into a distributed peer-to-peer architecture I call the Freelance pattern. Our use case will be a name resolution service. This is a common problem with ØMQ architectures: how do we know which endpoint to connect to? Hard-coding TCP/IP addresses in code is insanely fragile. Using configuration files creates an administration nightmare. Imagine if you had to hand-configure your web browser, on every PC or mobile phone you used, to know that "google.com" was "74.125.230.82."

A ØMQ name service (we'll make a simple implementation) must do the following:

- Resolve a logical name into at least a bind endpoint and a connect endpoint. A realistic name service would provide multiple bind endpoints, and possibly multiple connect endpoints as well.

- Allow us to manage multiple parallel environments (e.g., "test" versus "production") without modifying code.

- Be reliable, because if it is unavailable, applications won't be able to connect to the network.
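The first two requirements can be sketched as a small lookup table. This is only an illustration under assumptions, not the implementation developed later in the chapter: the `NameRegistry` and `ServiceEndpoints` names, the "inventory" service, and the example hostnames are all hypothetical, and a real name service would sit behind a network socket rather than live in the client process.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ServiceEndpoints:
    """Endpoints registered for one logical service name (hypothetical type)."""
    bind: Tuple[str, ...]      # addresses the service's own sockets bind to
    connect: Tuple[str, ...]   # addresses peers connect to in order to reach it


class NameRegistry:
    """In-memory table keyed by (environment, logical name)."""

    def __init__(self):
        self._table = {}

    def register(self, env, name, bind, connect):
        self._table[(env, name)] = ServiceEndpoints(tuple(bind), tuple(connect))

    def resolve(self, env, name):
        """Return the endpoints for a name, or None if the name is unknown."""
        return self._table.get((env, name))


# Illustrative data only: the same logical name maps to different
# endpoints depending on the environment, so application code that asks
# for "inventory" does not change between test and production.
registry = NameRegistry()
registry.register("test", "inventory",
                  bind=["tcp://*:5555"],
                  connect=["tcp://127.0.0.1:5555"])
registry.register("production", "inventory",
                  bind=["tcp://*:5555"],
                  connect=["tcp://inventory-1.example.com:5555",
                           "tcp://inventory-2.example.com:5555"])
```

Keying the table on the environment as well as the name is one way to switch between "test" and "production" by changing a single setting. The third requirement, reliability, is not addressed by this sketch at all.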

Putting a name service behind a service-oriented Majordomo broker is clever from some points of view. However, it's simpler and much less surprising to just expose the name service as a server to which clients can connect directly. If we do this right, the name service becomes the only global network endpoint we need to hard-code in our code or configuration files.

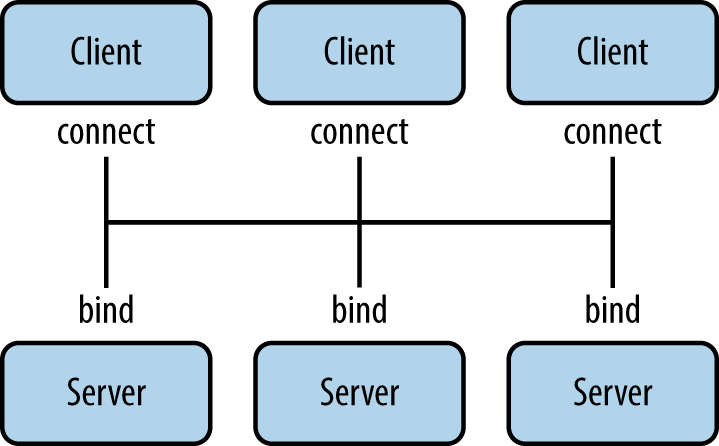

The types of failure we aim to handle are server crashes and restarts, server busy looping, server overload, and network issues. To get reliability, we'll create a pool of name servers so that if one crashes or goes away, clients can connect to another, and so on. In practice, two would be enough, but for this example we'll assume the pool can be any size (Figure 4-9).

In this architecture, a large set of clients connect to a small set of servers directly. The servers bind to their respective addresses. It's fundamentally different from a broker-based approach like Majordomo, where workers connect to the broker. Clients have a couple of options:

- Use REQ sockets and the Lazy Pirate pattern. Easy, but it would need some additional intelligence so clients don't stupidly try to reconnect to dead servers over and over.

- Use DEALER sockets and blast out requests (which will be load-balanced to all connected servers) until they get a reply. Effective, but not elegant.

- Use ROUTER sockets so clients can address specific servers. But how does the client know the identity of the server sockets? Either the server has to ping the client first (complex), or the server has to use a hard-coded, fixed identity known to the client (nasty).
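To make the "additional intelligence" in the first option concrete, here is a minimal sketch of the failover logic only, with the transport left out. Everything named here is an assumption for illustration: `FailoverClient`, the `cooldown` parameter, and the `send_request` callable are hypothetical. In a real client, `send_request` would wrap a REQ socket with a poll timeout in the Lazy Pirate style and return `None` when no reply arrives in time.

```python
import time


class FailoverClient:
    """Try each server in turn, skipping ones that failed recently."""

    def __init__(self, endpoints, send_request, cooldown=5.0,
                 clock=time.monotonic):
        self.endpoints = list(endpoints)
        # Hypothetical transport: (endpoint, request) -> reply, or None on timeout.
        self.send_request = send_request
        self.cooldown = cooldown          # seconds to avoid a failed server
        self.clock = clock
        self._failed_at = {}              # endpoint -> time of last failure

    def _is_usable(self, endpoint):
        failed = self._failed_at.get(endpoint)
        return failed is None or self.clock() - failed >= self.cooldown

    def request(self, request):
        """Return the first reply received, or None if no server answered."""
        for endpoint in self.endpoints:
            if not self._is_usable(endpoint):
                continue                  # recently dead: don't retry yet
            reply = self.send_request(endpoint, request)
            if reply is not None:
                self._failed_at.pop(endpoint, None)
                return reply
            self._failed_at[endpoint] = self.clock()
        return None


# Stand-in transport for demonstration: the first server never answers.
attempts = []

def fake_send(endpoint, request):
    attempts.append(endpoint)
    if endpoint == "tcp://127.0.0.1:5555":
        return None
    return b"74.125.230.82"

client = FailoverClient(["tcp://127.0.0.1:5555", "tcp://127.0.0.1:5556"],
                        fake_send)
first = client.request(b"google.com")    # tries 5555, then 5556
second = client.request(b"google.com")   # skips 5555 during its cooldown
```

One consequence of this design is that if every server is inside its cooldown window, `request` returns `None` without contacting anyone. Whether that is acceptable, or whether the client should then retry the least recently failed server, is a trade-off this sketch does not settle.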

We'll develop each of these in the following subsections.
