Chapter 3. Keys to Injecting Security into DevOps

Now let’s look at how to solve these problems and challenges, and how you can wire security and compliance into DevOps.

Shift Security Left

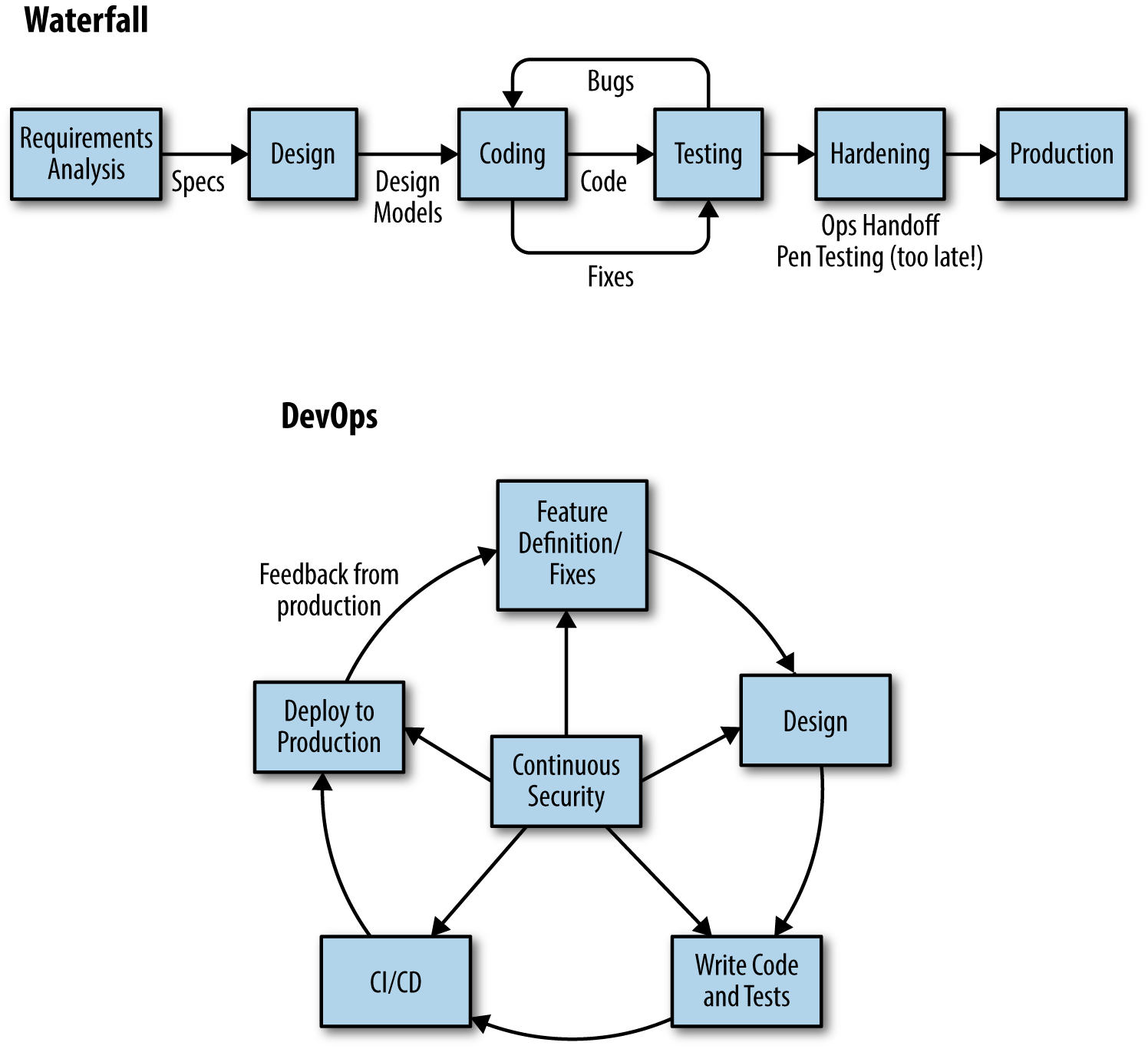

To keep up with the pace of Continuous Delivery, security must âshift left,â earlier into design and coding and into the automated test cycles, instead of waiting until the system is designed and built and then trying to fit some security checks just before release. In DevOps, security must fit into the way that engineers think and work: more iterative and incremental, and automated in ways that are efficient, repeatable, and easy to use. See Figure 3-1 for a comparison between waterfall delivery and the DevOps cycle.

Figure 3-1. The waterfall cycle versus the DevOps cycle

Some organizations do this by embedding infosec specialists into development and operations teams. But it is difficult to scale this way. There are too few infosec engineers to go around, especially ones who can work at the design and code level. This means that developers and operations need to be given more responsibility for security, training in security principles and practices, and tools to help them build and run secure systems.

Developers need to learn how to identify and mitigate security risks in design through threat modeling (looking at holes or weaknesses in the design from an attacker’s perspective), and how to take advantage of security features in their application frameworks and security libraries to prevent common security vulnerabilities like injection attacks. The OWASP and SAFECode communities provide many useful, free tools, frameworks, and guidance to help developers understand and solve common application security problems in any kind of system.

OWASP Proactive Controls

The OWASP Proactive Controls is a set of secure development practices, guidelines, and techniques that you should follow to build secure applications. These practices will help you to shift security earlier into design, coding, and testing:

- Verify for security early and often
  - Instead of leaving security testing and checks to the end of a project, include security in automated testing in Continuous Integration and Continuous Delivery.

- Parameterize queries
  - Prevent SQL injection by using a parameterized database interface.

- Encode data
  - Protect the application from XSS and other injection attacks by safely encoding data before passing it on to an interpreter.

- Validate all inputs
  - Treat all data as untrusted. Validate parameters and data elements using whitelisting techniques. Get to know and love regex.

- Implement identity and authentication controls
  - Use safe, standard methods and patterns for authentication and identity management. Take advantage of OWASP’s Cheat Sheets for authentication, session management, password storage, and forgotten passwords if you need to build these functions on your own.

- Implement appropriate access controls
  - Follow a few simple rules when implementing access control filters. Deny access by default. Implement access control in a central filter library; don’t hardcode access control checks throughout the application. And remember to code to the activity instead of to the role.

- Protect data
  - Understand how to use crypto properly to encrypt data at rest and in transit. Use proven encryption libraries like Google’s KeyCzar and Bouncy Castle.

- Implement logging and intrusion detection
  - Design your logging strategy with intrusion detection and forensics in mind. Instrument key points in your application and make logs safe and consistent.

- Take advantage of security frameworks and libraries
  - Take advantage of the security features of your application framework where possible, and fill in with special-purpose security libraries like Apache Shiro or Spring Security where you need to.

- Error and exception handling
  - Pay attention to error handling and exception handling throughout your application. Missed error handling can lead to runtime problems, including catastrophic failures. Leaking information in error handling can provide clues to attackers; don’t make their job easier than it already is.
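Three of these controls (parameterized queries, output encoding, and whitelist validation) can be sketched in a few lines of Python using only the standard library. This is a minimal illustration, not OWASP's reference code: the `users` table, the username pattern, and the function names are assumptions made for the example.

```python
import html
import re
import sqlite3

# Whitelist pattern (an assumption for this sketch): 3-20 letters, digits, or underscores.
USERNAME_RE = re.compile(r"[A-Za-z0-9_]{3,20}")


def validate_username(value: str) -> str:
    """Validate input: accept only values that match the whitelist pattern."""
    if not USERNAME_RE.fullmatch(value):
        raise ValueError("invalid username")
    return value


def find_user(conn: sqlite3.Connection, username: str):
    """Parameterize queries: the driver binds the value, so it is never parsed as SQL."""
    cur = conn.execute("SELECT id, name FROM users WHERE name = ?", (username,))
    return cur.fetchone()


def render_greeting(name: str) -> str:
    """Encode data: escape HTML metacharacters before the value reaches the browser."""
    return "<p>Hello, " + html.escape(name) + "</p>"


# Hypothetical in-memory database used only to exercise the functions above.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("INSERT INTO users (name) VALUES (?)", ("alice",))
```

Even if validation were skipped, a classic injection string such as `x' OR '1'='1` passed to `find_user` is compared as a literal name and matches no row, because it is bound as data rather than concatenated into the SQL text.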

Secure by Default

Shifting Security Left begins by making it easy for engineers to write secure code and difficult for them to make dangerous mistakes, wiring secure defaults into their templates and frameworks, and building in the proactive controls listed previously. You can prevent SQL injection at the framework level by using parameterized queries, hide or simplify the output encoding work needed to protect applications from XSS attacks, enforce safe HTTP headers, and provide simple and secure authentication functions. You can do all of this in ways that are practically invisible to the developers using the framework.
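One way to picture a secure default that is invisible to developers is a thin wrapper that adds safe HTTP headers to every response. The sketch below uses the standard Python WSGI calling convention; the class name, the `hello_app` example, and the specific header values are assumptions chosen for illustration and would need tuning for a real application.

```python
# Default headers are an assumption for this sketch; tune the values to your application.
SECURE_HEADERS = {
    "X-Content-Type-Options": "nosniff",
    "X-Frame-Options": "DENY",
    "Content-Security-Policy": "default-src 'self'",
    "Strict-Transport-Security": "max-age=31536000; includeSubDomains",
}


class SecureHeadersMiddleware:
    """Wrap any WSGI app so every response carries the default headers."""

    def __init__(self, app, headers=None):
        self.app = app
        self.headers = dict(SECURE_HEADERS if headers is None else headers)

    def __call__(self, environ, start_response):
        def secure_start_response(status, response_headers, exc_info=None):
            # Keep any header the application set explicitly; add the rest.
            present = {name.lower() for name, _ in response_headers}
            extra = [
                (name, value)
                for name, value in self.headers.items()
                if name.lower() not in present
            ]
            return start_response(status, list(response_headers) + extra, exc_info)

        return self.app(environ, secure_start_response)


def hello_app(environ, start_response):
    """An application written with no security-specific code."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello"]


app = SecureHeadersMiddleware(hello_app)
```

Because the wrapper sits at the framework level, `hello_app` gets the headers without its author having to know they exist, while an application that sets one of these headers itself keeps its own value.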

Making software and software development secure by default is core to the security programs at organizations like Google, Facebook, Etsy, and Netflix. Although the payback can be huge, it demands a fundamental change in the way that infosec and development work together. It requires close collaboration between security engineers and software engineers, along with strong application security knowledge and the software design and coding skills to build security protection into frameworks and templates in ways that are safe and easy to use. Most important, it requires a commitment from developers and their managers to use these frameworks wherever possible.

Most of us aren’t going to be able to start our application security programs here; instead, we’ll need to work our way back to the beginning and build more security into later stages.

Making Security Self-Service

Engineers in DevOps shops work in a self-service environment. Automated Continuous Integration servers provide self-service builds and testing. Cloud platforms and virtualization technologies like Vagrant provide self-service, on-demand provisioning. Container technologies like Docker offer simple, self-service packaging and shipping.

Security needs to be made available to the team in the same way: convenient, available when your engineers need it, seamless, and efficient.

Don’t get in their way. Don’t make developers wait for answers or stop work in order to get help. Give them security tools that they can use and understand and that they can provision and run themselves. And ensure that those tools fit into how they work: into Continuous Integration and Continuous Delivery, into their IDEs as they enter code, into code pull requests. In other words, ensure that tests and checks provide fast, clear feedback.

At Twitter, security checks are run automatically every time a developer makes a change. Tools like Brakeman check the code for security vulnerabilities, and provide feedback directly to the developer if something is found, explaining what is wrong, why it is wrong, and how to fix it. Developers have a “bullshit” button to reject false positive findings. Security checks become just another part of their coding cycle. [2]
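The shape of that feedback loop can be sketched with a toy checker. This is not Twitter's tooling or Brakeman; the two rules, the `scan` function, and the fingerprint scheme are hypothetical, and a real static analyzer does far more than match patterns. The point is the structure: each finding says what is wrong, why, and how to fix it, and a developer can suppress a false positive by its fingerprint.

```python
import hashlib
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    rule_id: str
    pattern: re.Pattern
    what: str
    why: str
    fix: str


# Hypothetical rules for illustration only.
RULES = [
    Rule(
        rule_id="PY-EVAL",
        pattern=re.compile(r"\beval\("),
        what="call to eval()",
        why="eval() runs arbitrary code if its input is attacker-controlled",
        fix="parse the input with ast.literal_eval() or a dedicated parser",
    ),
    Rule(
        rule_id="PY-SHELL",
        pattern=re.compile(r"shell\s*=\s*True"),
        what="subprocess call with shell=True",
        why="shell metacharacters in arguments can inject commands",
        fix="pass the command as a list and drop shell=True",
    ),
]


def fingerprint(path: str, rule_id: str, line: str) -> str:
    """Stable identifier for a finding, so a rejection survives line-number shifts."""
    raw = f"{path}:{rule_id}:{line.strip()}"
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()[:12]


def scan(path: str, source: str, suppressed=frozenset()):
    """Return findings for one file, skipping those marked as false positives."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for rule in RULES:
            if not rule.pattern.search(line):
                continue
            fp = fingerprint(path, rule.rule_id, line)
            if fp in suppressed:
                continue
            findings.append({
                "path": path,
                "line": lineno,
                "rule": rule.rule_id,
                "what": rule.what,
                "why": rule.why,
                "fix": rule.fix,
                "fingerprint": fp,
            })
    return findings
```

Running `scan` on each changed file in a pull request, and storing rejected fingerprints alongside the code, keeps the check fast and lets developers dismiss noise without waiting on the security team.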

Using Infrastructure as Code

Another key to DevOps is Infrastructure as Code: managing infrastructure configuration in code using tools like Ansible, Chef, Puppet, and Salt. This speeds up and simplifies provisioning and configuring systems, especially at scale. It also helps to ensure consistency between systems and between test and production, standardizing configurations and minimizing configuration drift, and reduces the chance of an attacker finding and exploiting a one-off mistake.

Treating infrastructure provisioning and configuration as a software problem makes configuration changes repeatable, auditable, and safer. This means following software engineering practices like version control, peer code reviews, refactoring, static analysis, automated unit testing, and Continuous Integration, and validating and deploying changes through the same kind of Continuous Delivery pipelines.

Security teams can also use Infrastructure as Code to program security policies directly into configuration, to continuously audit and enforce policies at runtime, and to patch vulnerabilities quickly and safely.
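As a rough sketch of what "policy in code" can look like, the following Python compares an `sshd_config`-style file against a required baseline and reports drift. Tools like Ansible, Chef, Puppet, and Salt provide their own mechanisms for this; the policy values, function names, and the rule that a missing setting counts as a violation are assumptions made for the example.

```python
# Hypothetical hardening policy: keyword -> required value.
SSHD_POLICY = {
    "PermitRootLogin": "no",
    "PasswordAuthentication": "no",
    "X11Forwarding": "no",
}


def parse_sshd_config(text: str) -> dict:
    """Parse 'Keyword value' lines, skipping blank lines and '#' comment lines."""
    settings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        if len(parts) == 2:
            key, value = parts
            # Keywords are case-insensitive; keep the first value seen for each.
            settings.setdefault(key.lower(), value.strip())
    return settings


def audit(settings: dict, policy: dict) -> list:
    """Return (keyword, expected, actual) for every setting that violates policy."""
    violations = []
    for key, expected in policy.items():
        actual = settings.get(key.lower())
        if actual != expected:
            violations.append((key, expected, actual))
    return violations
```

Because the policy is ordinary code and data, it can be version controlled, peer reviewed, and run both in the pipeline before a change ships and on a schedule against running systems.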

Iterative, Incremental Change to Contain Risks

DevOps and Continuous Delivery reduce the risk of change by making many small, incremental changes instead of a few “big bang” changes.

Changing more often exercises and proves out your ability to test and successfully push out changes, enhancing your confidence in your build and release processes. Additionally, it forces you to automate and streamline these processes, including configuration management and testing and deployment, which makes them more repeatable, reliable, and auditable.

Smaller, incremental changes are safer by nature. Because the scope of any change is small and generally isolated, the “blast radius” of each change is contained. Mistakes are also easier to catch because incremental changes made in small batches are easier to review and understand upfront, and require less testing.

When something does go wrong, it is also easier to understand what happened and to fix it, either by rolling back the change or pushing a fix out quickly using the Continuous Delivery pipeline.

It’s also important to understand that in DevOps many changes are rolled out dark. That is, they are disabled by default, using runtime “feature flags” or “feature toggles.” These features are usually switched on only for a small number of users at a time or for a short period, and in some cases only after an “operability review” or premortem review to make sure that the team understands what to watch for and is prepared for problems. [3]
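A dark launch with a gradual rollout can be sketched as follows. The `FeatureFlags` class and the flag name are hypothetical, and hashing the user ID into one of 100 buckets is one common approach rather than the method of any particular organization mentioned here.

```python
import hashlib


class FeatureFlags:
    """In-memory feature toggles with percentage-based rollout."""

    def __init__(self):
        self._rollout = {}  # flag name -> percentage of users enabled (0-100)

    def set_rollout(self, flag: str, percent: int) -> None:
        if not 0 <= percent <= 100:
            raise ValueError("percent must be between 0 and 100")
        self._rollout[flag] = percent

    def is_enabled(self, flag: str, user_id: str) -> bool:
        # Unknown flags default to 0 percent: new code ships dark.
        percent = self._rollout.get(flag, 0)
        # Hash flag and user together so each user lands in a stable bucket
        # per flag, and different flags select different user subsets.
        digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
        bucket = int.from_bytes(digest[:4], "big") % 100
        return bucket < percent


flags = FeatureFlags()
flags.set_rollout("new_checkout", 10)  # hypothetical flag, on for roughly 10% of users
```

Because a user's bucket never changes, raising the percentage only adds users; nobody who already sees the feature loses it as the rollout widens. Setting the percentage back to 0 switches the feature off without a deployment.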

Another way to minimize the risk of change in Continuous Delivery or Continuous Deployment is canary releasing. Changes can be rolled out to a single node first, and automatically checked to ensure that there are no errors or negative trends in key metrics (for example, conversion rates), based on “the canary in a coal mine” metaphor. If problems are found with the canary system, the change is rolled back, the deployment is canceled, and the pipeline shut down until a fix is ready to go out. After a specified period of time, if the canary is still healthy, the changes are rolled out to more servers, and then eventually to the entire environment.
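The automated check at the heart of a canary release can be sketched as a comparison between the canary node and the rest of the fleet. The metric names, the thresholds, and the three verdicts below are assumptions for illustration; real pipelines usually watch more signals and apply statistical tests.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    requests: int
    errors: int
    conversions: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0

    @property
    def conversion_rate(self) -> float:
        return self.conversions / self.requests if self.requests else 0.0


def canary_verdict(
    baseline: Metrics,
    canary: Metrics,
    max_error_increase: float = 0.01,   # absolute increase in error rate tolerated
    max_conversion_drop: float = 0.10,  # relative drop in conversion rate tolerated
    min_requests: int = 500,            # traffic needed before judging the canary
) -> str:
    """Return 'wait', 'rollback', or 'promote' for the canary node."""
    if canary.requests < min_requests:
        return "wait"
    if canary.error_rate > baseline.error_rate + max_error_increase:
        return "rollback"
    if canary.conversion_rate < baseline.conversion_rate * (1 - max_conversion_drop):
        return "rollback"
    return "promote"
```

A pipeline would call this repeatedly over the observation period, halting and rolling back on the first `rollback` verdict and widening the deployment only after the canary has stayed healthy for the whole window.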

Use the Speed of Continuous Delivery to Your Advantage

The speed at which DevOps moves can seem scary to infosec analysts and auditors. But security can take advantage of the speed of delivery to respond quickly to security threats and deal with vulnerabilities.

A major problem that almost all organizations face is that even when they know that they have a serious security vulnerability in a system, they can’t get the fix out fast enough to stop attackers from exploiting the vulnerability.

The longer vulnerabilities are exposed, the more likely the system will be, or has already been, attacked. WhiteHat Security, which provides a service for scanning websites for security vulnerabilities, regularly analyzes and reports on vulnerability data that it collects. Using data from 2013 and 2014, WhiteHat found that 35 percent of finance and insurance websites are “always vulnerable,” meaning that these sites had at least one serious vulnerability exposed every single day of the year. The stats for other industries and government organizations were even worse. Only 25 percent of finance and insurance sites were vulnerable for less than 30 days of the year. On average, serious vulnerabilities stayed open for 739 days, and only 27 percent of serious vulnerabilities were fixed at all, because of the costs and risks and overhead involved in getting patches out.

Continuous Delivery, together with close collaboration between developers, operations, and infosec staff, can close vulnerability windows. Most security patches are small and don’t take long to code. A repeatable, automated Continuous Delivery pipeline means that you can figure out and fix a security bug or download a patch from a vendor, test to make sure that it won’t introduce a regression, and get it out quickly, with minimal cost and risk. This is in direct contrast to “hot fixes” done under pressure that have led to failures in the past.

Speed also lets you make meaningful risk and cost trade-off decisions. Recognizing that a vulnerability might be difficult to exploit, you can decide to accept the risk temporarily, knowing that you don’t need to wait for several weeks or months until the next release, and that the team can respond quickly with a fix if it needs to.

Speed of delivery now becomes a security advantage instead of a source of risk.

The Honeymoon Effect

There appears to be another security advantage to moving fast in DevOps. Recent research shows that smaller, more frequent changes can make systems safer from attackers by means of the “Honeymoon Effect”: older software that is more vulnerable is easier to attack than software that has recently been changed.

Attacks take time. It takes time to identify vulnerabilities, time to understand them, and time to craft and execute an exploit. This is why many attacks are made against legacy code with known vulnerabilities. In an environment where code and configuration changes are rolled out quickly and changed often, it is more difficult for attackers to follow what is going on, to identify a weakness, and to understand how to exploit it. The system becomes a moving target. By the time attackers are ready to make their move, the code or configuration might have already been changed and the vulnerability might have been moved or closed.

To some extent, relying on change to confuse attackers is “security through obscurity,” which is generally a weak defensive position. But constant change should offer an edge to fast-moving organizations, and a chance to hide defensive actions from attackers who have gained a foothold in your system, as Sam Guckenheimer at Microsoft explains:

If you’re one of the bad guys, what do you want? You want a static network with lots of snowflakes and lots of places to hide that aren’t touched. And if someone detects you, you want to be able to spot the defensive action so that you can take countermeasures.... With DevOps, you have a very fast, automated release pipeline, you’re constantly redeploying. If you are deploying somewhere on your net, it doesn’t look like a special action taken against the attackers.

[1] Rich Smith, Director of Security Engineering, Etsy. “Crafting an Effective Security Organization.” QCon 2015, http://www.infoq.com/presentations/security-etsy

[2] “Put your Robots to Work: Security Automation at Twitter.” OWASP AppSec USA 2012, https://vimeo.com/54250716

[3] http://www.slideshare.net/jallspaw/go-or-nogo-operability-and-contingency-planning-at-etsycom
