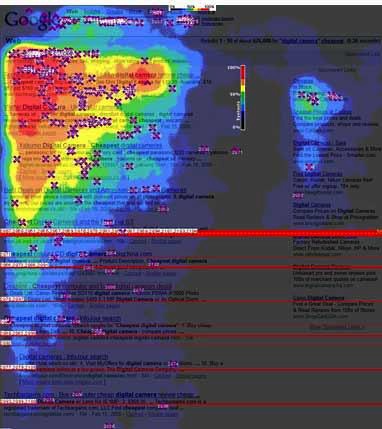

Research firms Enquiro, Eyetools, and Didit conducted heat-map testing with search engine users (http://www.enquiro.com/research/eyetrackingreport.asp), producing fascinating results about what users see and focus on when they search. Figure 1-8 shows a heat map from a test performed on Google. The darkest shading, in the top-left area of the page, marks where users spent the most time focusing their eyes.

Published in November 2006, this study clearly illustrates how little attention users pay to results lower on the page compared with those higher up. It also shows how their eyes are drawn to bolded keywords, titles, and descriptions in the natural (“organic”) results rather than to the paid search listings, which receive comparatively little attention.

The study also showed that the physical position of search results on the screen produced different eye-tracking patterns. When viewing a standard Google results page, users tended to trace an “F-shaped” pattern with their eyes: they focused first and longest on the upper-left corner of the screen, then moved down vertically through the first two or three results, across the page to the first paid result, down another few results, and then across again to the second paid result. (The study covered only search results in left-to-right languages; results for Hebrew and other languages that are not read left to right would likely differ.)

In May 2007, Google introduced Universal Search. Rather than showing only the 10 most relevant web pages (now referred to as the “10 blue links”), Google began including other types of media, such as videos, images, and news results, within its main web search results. The other search engines followed suit within a few months, and the industry now refers to this general concept as Blended Search.

Blended Search, however, creates more of a chunking effect, in which the chunks form around rich media objects such as images or videos. Understandably, users focus on the image first. They then look at the text beside it to see whether it corresponds to the image or video thumbnail (a video is initially shown as a thumbnail image). Figure 1-9, based on an updated study Enquiro published in September 2007, shows the eye-tracking pattern on a Blended Search page.

Users’ eyes then tend to move in shorter paths to the side, with the image rather than the upper-left-corner text as their anchor. Note, however, that this is the case only when the image is placed “above the fold,” so that the user can see it without having to scroll down on the page. Images below the fold do not influence initial search behavior until the searcher scrolls down.

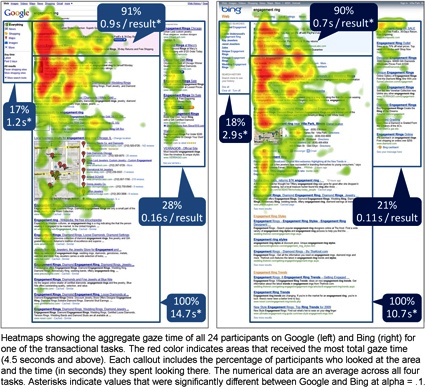

A more recent study by User Centric, published in January 2011 (http://www.usercentric.com/news/2011/01/26/eye-tracking-bing-vs-google-second-look), found similar patterns, as Figure 1-10 illustrates.

In 2010, Enquiro investigated the impact of Google Instant on search usage and attention (http://ask.enquiro.com/2010/eye-tracking-google-instant/), finding that, for the queries typed in its study:

- The percentage of the query typed decreased in 25% of the tasks, with no change in the others.
- Query length increased in 17% of the tasks, with no change in the others.
- Time to click decreased in 33% of the tasks and increased in 8% of the tasks.

These studies are a vivid reminder of how important search engine results pages (SERPs) really are. As the eye-tracking research suggests, the evolution of “rich” or “personalized” search is likely to alter users’ search patterns even further: there will be more items on the page for them to focus on, and more ways for them to remember and access the search listings. Search marketers need to be prepared for this as well. Google’s Search, plus Your World announcement in January 2012 is also likely to have a significant impact on the results, but as of February 2012 no studies had measured that impact.
