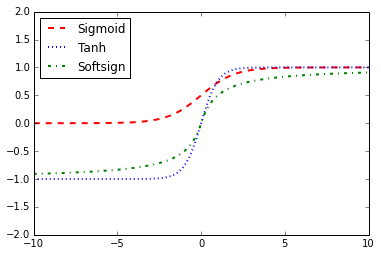

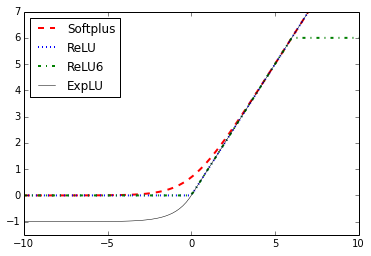

These two graphs illustrate the different activation functions: ReLU, ReLU6, softplus, exponential LU, sigmoid, softsign, and hyperbolic tangent.

Here, we can see four of the activation functions: softplus, ReLU, ReLU6, and exponential LU. These functions flatten out to the left of zero and increase linearly (or, in the case of softplus, almost linearly) to the right of zero. The exception is ReLU6, which is capped at a maximum value of six.
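To make the shapes of these four curves concrete, here is a minimal sketch of the functions in plain NumPy. It is an illustration under assumptions rather than the implementation behind the graphs: the function names are chosen for this example, and exponential LU is written in its common form with a scale of 1, so it levels off at -1 for large negative inputs.

```python
import numpy as np

def relu(x):
    # max(0, x): zero to the left of zero, linear to the right
    return np.maximum(0.0, x)

def relu6(x):
    # min(max(0, x), 6): like ReLU, but capped at six
    return np.minimum(np.maximum(0.0, x), 6.0)

def softplus(x):
    # log(1 + exp(x)), a smooth version of ReLU;
    # logaddexp(0, x) computes it without overflow for large x
    return np.logaddexp(0.0, x)

def elu(x):
    # x for x > 0, exp(x) - 1 otherwise (assumes a scale of 1);
    # the inner minimum keeps expm1 from overflowing on large positive x
    return np.where(x > 0, x, np.expm1(np.minimum(x, 0.0)))

# Sample points on both sides of zero
x = np.array([-10.0, -1.0, 0.0, 1.0, 10.0])
for fn in (relu, relu6, softplus, elu):
    print(fn.__name__, fn(x))
```

Evaluating at a few points on either side of zero shows the behavior described above: every function is close to flat for large negative inputs and grows with slope close to one for positive inputs, and only ReLU6 stops growing once it reaches six.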