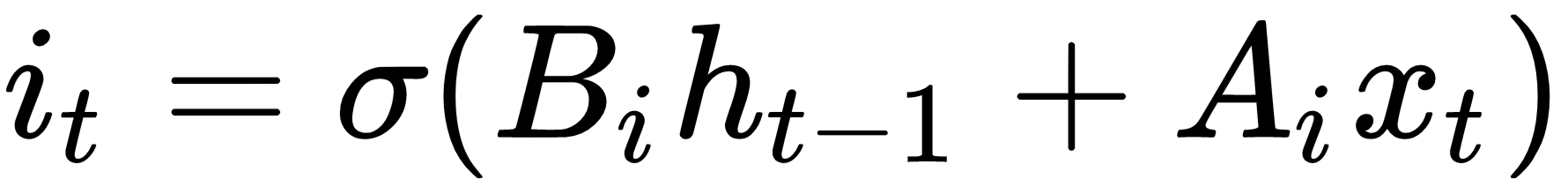

Long Short-Term Memory (LSTM) is a variant of the traditional RNN. It was designed to address the vanishing gradient problem that RNNs run into when trained on long sequences; exploding gradients are usually handled separately, for example with gradient clipping. To address this issue, LSTM cells maintain an internal cell state regulated by gates, including a forget gate that controls how much information from the previous cell state is carried forward to the next time step. To see how this works, we will walk through an unbiased version of LSTM (one without bias terms) one equation at a time. The first step is the same as for the regular RNN:
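The equation for this first step is not shown in this extract. As a minimal sketch, assuming it is the standard unbiased (Elman-style) RNN update $h_t = \tanh(W x_t + U h_{t-1})$, one time step could look like the code below. The helper names `matvec` and `rnn_step` and the toy weights are hypothetical, chosen only for illustration; plain Python lists stand in for matrices so the example has no dependencies.

```python
import math

def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]

def rnn_step(x_t, h_prev, W, U):
    """One unbiased vanilla-RNN step (assumed form): h_t = tanh(W x_t + U h_prev).

    W maps the input into the hidden space and U maps the previous hidden
    state into the hidden space. No bias term is added.
    """
    pre = [a + b for a, b in zip(matvec(W, x_t), matvec(U, h_prev))]
    return [math.tanh(z) for z in pre]

# Hypothetical toy weights: 2-dimensional input, 2-dimensional hidden state.
W = [[0.5, -0.2], [0.1, 0.3]]
U = [[0.4, 0.0], [0.0, 0.4]]

h = [0.0, 0.0]  # initial hidden state
for x in ([1.0, 0.0], [0.0, 1.0]):  # a two-step input sequence
    h = rnn_step(x, h, W, U)
print(h)
```

Because the same `W` and `U` are reused at every step, gradients flowing back through many steps are repeatedly multiplied by these weights and by the tanh derivative, which is where the vanishing gradient problem comes from.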

To decide which values to forget and which to pass through, we first compute candidate values as follows. These values are the candidate contents of what is often called the memory cell:
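The candidate-value equation is also missing from this extract. The sketch below assumes the common unbiased formulation $\tilde{c}_t = \tanh(W_c x_t + U_c h_{t-1})$, plus the standard LSTM cell update $c_t = f_t \odot c_{t-1} + i_t \odot \tilde{c}_t$, where the forget gate $f_t$ and input gate $i_t$ are sigmoid gates of the same form. The input gate and the cell update are not described in this passage; they are included only as the standard formulation. All function names and weights are hypothetical.

```python
import math

def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def affine(x_t, h_prev, W, U):
    """W x_t + U h_prev, with no bias term (the unbiased setup)."""
    return [a + b for a, b in zip(matvec(W, x_t), matvec(U, h_prev))]

def candidate_values(x_t, h_prev, W_c, U_c):
    """Candidate cell values (assumed form): tanh(W_c x_t + U_c h_prev), each in (-1, 1)."""
    return [math.tanh(z) for z in affine(x_t, h_prev, W_c, U_c)]

def gate(x_t, h_prev, W_g, U_g):
    """A sigmoid gate such as the forget gate: sigma(W_g x_t + U_g h_prev), each in (0, 1)."""
    return [sigmoid(z) for z in affine(x_t, h_prev, W_g, U_g)]

def cell_update(c_prev, f_t, i_t, c_tilde):
    """Standard cell update, elementwise: c_t = f_t * c_prev + i_t * c_tilde."""
    return [f * c + i * ct for f, c, i, ct in zip(f_t, c_prev, i_t, c_tilde)]

# Hypothetical weights for a 2-dimensional input and 2-dimensional hidden/cell state.
W_c, U_c = [[0.5, -0.2], [0.1, 0.3]], [[0.4, 0.0], [0.0, 0.4]]
W_f, U_f = [[0.3, 0.1], [-0.1, 0.2]], [[0.1, 0.0], [0.0, 0.1]]
W_i, U_i = [[0.2, 0.2], [0.0, 0.5]], [[0.0, 0.1], [0.1, 0.0]]
x_t, h_prev, c_prev = [1.0, 0.0], [0.0, 0.0], [0.2, -0.4]

c_tilde = candidate_values(x_t, h_prev, W_c, U_c)
f_t = gate(x_t, h_prev, W_f, U_f)  # how much of c_prev to keep
i_t = gate(x_t, h_prev, W_i, U_i)  # how much of c_tilde to write
c_t = cell_update(c_prev, f_t, i_t, c_tilde)
print(c_tilde, f_t, c_t)
```

The design choice here is that tanh keeps candidate values in (-1, 1), while the sigmoid keeps each gate entry in (0, 1), so a gate acts as a soft per-element switch: a forget-gate entry near 1 passes the old cell value through almost unchanged, and an entry near 0 erases it.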

Now we ...