Chapter 1. Introduction

What Is Salt?

Salt is a remote execution framework and configuration management system. It is similar to Chef, Puppet, Ansible, and cfengine. These systems are all written to solve the same basic problem: how do you maintain consistency across many machines, whether it is 2 machines or 20,000? What makes Salt different is that it accomplishes this high-level goal via a very fast and secure communication system. Its high-speed data bus allows Salt to manage just a few hosts or even a very large environment containing thousands of hosts. This is the very backbone of Salt. Once this encrypted communication channel is established, many more options open up. On top of this authenticated event bus is the remote execution engine. Then, continuing to build on existing layers, comes the state system. The state system uses the remote execution system, which, in turn, is layered on top of the secure event bus. This layering of functionality is what makes Salt so powerful.

But this is just the core of what Salt provides. Salt is written in Python, and its execution framework is just more Python. The default configuration uses a standard data format, YAML. Salt comes with a couple of other options if you don’t want to use YAML. The power of Salt is in its extensibility. Most of Salt can easily be customized—everything from the format of the data files to the code that runs on each host to how data is exchanged. Salt provides powerful application programming interfaces (APIs) and easy ways to layer new code on top of existing modules. This code can either be run on the centralized coordination host (aka the master) or on the clients themselves (aka the minions). Salt will do the “heavy lifting” of determining which host should run which code based on a number of different targeting options.

High-Level Architecture

There are a few key terms that you need to understand before moving on. First, all of your hosts are called minions. Actions performed on them are usually coordinated via a centralized machine called the master. As a host, the master is also a minion to itself. In most cases, you will initiate commands on the master giving a target, the command to run, and any arguments. Salt will expand the target into a list of minions. In the simplest case, the target can be a single minion specified by its minion ID. You can also list several minion IDs, or use globs to provide some pattern to match against. (For example, a simple * will match all minions.) You can even reach further into the minion’s data and target based on the operating system, or the number of CPUs, or any custom metadata you set.
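To make the targeting idea concrete, here is a minimal Python sketch of how a target might be expanded into a list of minion IDs. The function name `expand_target`, the sample minion IDs, and the `"glob"` and `"list"` labels are assumptions made for illustration; this is not Salt's actual implementation.

```python
from fnmatch import fnmatchcase

# Hypothetical minion IDs, invented for this example.
MINION_IDS = ["web1.example.com", "web2.example.com", "db1.example.com"]


def expand_target(target, target_type="glob", minion_ids=MINION_IDS):
    """Return the sorted minion IDs selected by the target (illustrative only)."""
    if target_type == "glob":
        # Shell-style wildcard match against each minion ID.
        return sorted(m for m in minion_ids if fnmatchcase(m, target))
    if target_type == "list":
        # Explicit list of minion IDs; IDs that are not known are dropped.
        wanted = set(target)
        return sorted(m for m in minion_ids if m in wanted)
    raise ValueError(f"unsupported target type: {target_type}")


print(expand_target("*"))
print(expand_target("web*"))
print(expand_target(["db1.example.com", "missing"], target_type="list"))
```

A simple `*` selects every ID, while `web*` narrows the list to the two web hosts.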

The basic design is a very simple client/server model. Salt runs as a daemon, or background process, on both the master and the minion. When the master process comes up, it provides a location (socket) where minions can connect and watch for commands. The minion is configured with the location of the master, that is, its DNS name or IP address. When the minion daemon starts, it connects to that master socket and listens for events. As previously mentioned, each minion has an ID. This ID must be unique so that the master can exchange data with only that minion, if desired. The ID is usually the hostname, but it can be configured as something else. Once the minion connects to the master, there is an initial “handshake” process in which the master needs to confirm that the minion matches the ID it is advertising.[1]

In the default case, this means you will need to manually confirm the minion. Once the minion ID is established, the master and minion can communicate along a ZeroMQ[2] data bus. When the master sends out a command over ZeroMQ, it is said to “publish” events, and when the minions are listening to the data bus, they are said to “subscribe” to those events, hence the descriptor pub-sub.

When the master publishes a command, it simply puts it on the ZeroMQ bus for all of the minions to see. Each minion will then look at the command and the target (and the target type) to determine if it should run that command. If the minion determines that it does not match the combination of target and target type, then it will simply ignore that command. When the master sends a command out to the minions, it relies on the minions being able to identify the command via the target.
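The minion-side decision described here can be sketched in a few lines of Python. Everything in this block is hypothetical: the function names, the shape of the `publication` dictionary, and the `key:value` form of the grain target are assumptions chosen for illustration, not Salt's wire format or code.

```python
from fnmatch import fnmatchcase


def minion_matches(minion_id, grains, target, target_type):
    """Decide whether this minion is selected by the target."""
    if target_type == "glob":
        return fnmatchcase(minion_id, target)
    if target_type == "list":
        return minion_id in target
    if target_type == "grain":
        # Assumed target form for this sketch: "key:value".
        key, _, value = target.partition(":")
        return str(grains.get(key)) == value
    return False  # Unknown target type: treat as no match.


def handle_publication(minion_id, grains, publication):
    """Act on a published command only if the target selects this minion."""
    if not minion_matches(
        minion_id, grains, publication["target"], publication["target_type"]
    ):
        return None  # Not addressed to this minion, so ignore it.
    return f"{minion_id} runs {publication['command']}"


pub = {"target": "os:CentOS", "target_type": "grain", "command": "pkg.install"}
print(handle_publication("web1", {"os": "CentOS"}, pub))
print(handle_publication("db1", {"os": "Ubuntu"}, pub))
```

Every minion sees the same publication, but only the one whose data matches the target and target type acts on it; the other returns nothing.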

Note

We have glossed over some details here. While the minions do listen on the data bus to match their ID and associated data (e.g., grains[3]) to the target (using the target type to determine which set of data to use), the master will verify that the target given does or does not match any minions. In the case of a match against the name (e.g., glob, regex, or simple list), the master will match the target with a list of IDs it has (via salt-key). But, for grain matching, the master will look at a local cache of the grains to determine if any minions match. This is similar for pillar[4] matching and for matching by IP address. All of that logic allows the master to build a list of minions that should respond to a given command. The master can then compare that list against the list of minions that return data and thus identify which minions did not respond in time. Also, the master can determine that no minions match the criteria given (target combined with target type) and thus not send any command.
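The bookkeeping described in this note amounts to comparing two sets. The following Python sketch illustrates it under stated assumptions: the function name, the minion IDs, and the shape of the `returns` mapping are invented for the example and do not reflect Salt's internal data structures.

```python
def find_missing_minions(expected, returns):
    """Return the sorted IDs of minions that were targeted but did not reply."""
    return sorted(set(expected) - set(returns))


# Minions the master expects to respond (hypothetical IDs).
expected = ["web1", "web2", "db1"]

# Data that came back before the timeout, keyed by minion ID.
returns = {"web1": True, "db1": True}

print(find_missing_minions(expected, returns))
```

If the expected list is empty to begin with, the master knows that nothing matched and has no reason to publish the command at all.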

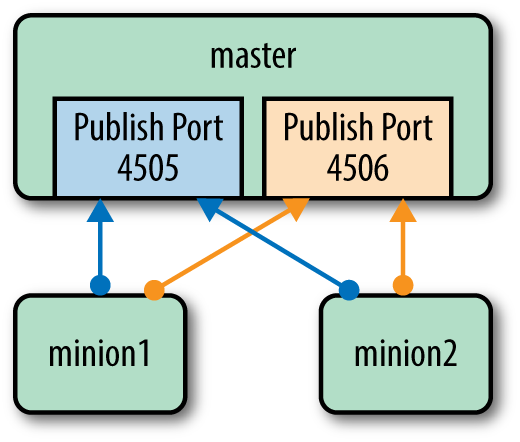

This is only half of the communication. Once a minion has decided to execute the given command, it will return data to the master (see Figure 1-1). The first part of the communication, where the minions are listening for commands, is called publish and subscribe, or pub-sub. The minions all connect to a single port on the master to listen for these commands. But there is a second port on the master to which all minions send back their data. This includes whether the command succeeded or not, and a variety of other data.

Figure 1-1. Communication between master and minions

This remote execution framework provides the basic toolset upon which other functionality is built. The most notable example is salt states. States are a way to manage configurations across all of your minions. A salt state defines how you want a given host to be configured. For example, you might want a list of packages installed on a specific type of machine, such as all web servers. Or maybe you want to have a number of users added on a shared development server. The state has those requirements enumerated, normally using YAML. Once you have the configuration defined, you give the state system the minions to which you want that particular configuration applied. The minions are selected through the same flexible targeting system mentioned earlier. A salt state gives you a very flexible way to define a “template” for setting up a given host.

The last important architectural cornerstone of Salt is that all of the communication is done via a secure, encrypted channel. Earlier, we briefly mentioned that when a minion first connects to the master, there is a process whereby the minion is validated. The default process is that you must view the list and manually accept the known minions. Once the minion is validated, the minion and master exchange encryption keys. The encryption uses the industry-standard AES specification. The master will store the public key of every minion. It is therefore critical that you maintain tight security control on your master. Once the trust relationship is established, any communication between the master and all minions is secure. However, this security is dependent on that initial setup of trust and on the sustained security of the master. The minions, on the other hand, do not have any global secrets. If a minion is compromised, it will be able to watch the ZeroMQ data bus and see commands sent out to the minions. But that is all it will be able to do. The net result is that all data sent between the master and its minions remains secure. But while the communication channel is kept secure, you still need to maintain a tight security profile on your master.

Some Quick Examples

Let’s run through a couple of quick examples so you can see what Salt can do.

System Management

A common use case for a remote execution framework is to install packages. With Salt, a single command can be used to install (or upgrade) packages across your entire infrastructure. With its powerful targeting syntax, you can install a package on all hosts, or only on CentOS 5.2 hosts, or maybe only on hosts with 24 CPUs.

Here is a simple example:

salt '*' pkg.install apache

This installs the Apache package on every host (*).

If you want to target a list of minions based on information about the host (e.g., the operating system or some hardware attribute), you do so by using some data that the master keeps about each minion. This data coming from the minion (e.g., operating system) is called grains. But there is another type of data: pillar data. While grains are advertised by the minion back to the master, pillar data is stored on the master and is made available to each minion individually; that is, a minion cannot see any pillar data but its own.

It is common for people new to Salt to ask about grains versus pillar data, so we will discuss them further in Chapter 5. For the moment, you can think of grains as metadata about the host (e.g., number of CPUs), while pillar is data the host needs (e.g., a database password). In other words, a minion tells the master what its grains are, while the minion asks the master for its pillar data. For now, just know that you can use either to define the target for a command.
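The difference in direction between grains and pillar can be sketched with a toy model in Python. The `Master` class, its method names, and the sample values are all hypothetical; the sketch only illustrates that grains flow from minion to master, while pillar data is held on the master and handed out per minion.

```python
class Master:
    """Toy model of the master's view of grains and pillar (illustrative only)."""

    def __init__(self, pillar_store):
        self._pillar_store = pillar_store  # Pillar data lives on the master.
        self.grains_cache = {}             # Grains as reported by each minion.

    def receive_grains(self, minion_id, grains):
        """A minion tells the master what its grains are."""
        self.grains_cache[minion_id] = dict(grains)

    def pillar_for(self, minion_id):
        """A minion asks for its pillar and receives only its own data."""
        return dict(self._pillar_store.get(minion_id, {}))


master = Master(
    {
        "web1": {"db_password": "web-secret"},
        "db1": {"db_password": "db-secret"},
    }
)
master.receive_grains("web1", {"os": "CentOS", "num_cpus": 24})

print(master.grains_cache["web1"])
print(master.pillar_for("web1"))
```

In this model, `web1` never sees the entry stored for `db1`, which mirrors the rule that a minion cannot see any pillar data but its own.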

Configuration Management

The central master can distribute files that describe how a system should be configured. As we’ve discussed, these descriptions are called states, and they are stored in simple YAML files called SLS (salt states). A state to manage the main index file for Apache might look like the following:

    webserver_main_index_file:
      file.managed:
        - name: /var/www/index.html
        - source: salt://webserver/main.html

The first line is simply a unique identifier. Next comes the command to enforce. The description (i.e., state) says that a file is managed by Salt. The source of the file is on the Salt master in the location given. (Salt comes with a very lightweight file server that can manage files it needs—for example, configuration files, Java WAR files, or Windows installers.) The next two lines describe where the file should end up on the minion (/var/www/index.html), and where on the master to find the file (…/webserver/main.html). (The path for the source of the file is relative to the file root for the master. That will be explained later, but just know that the source is not an absolute file path, while the destination is an absolute path.)
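Once a YAML parser has read the state, what remains is ordinary nested data: a mapping keyed by the identifier, containing the command and a list of one-key arguments. The Python sketch below writes that structure out by hand and walks it. The helper names `flatten_state` and `resolve_source`, and the file root `/srv/salt`, are assumptions for illustration and are not taken from the text.

```python
import posixpath

# The state from the text, written as the nested data a YAML parser produces.
STATE = {
    "webserver_main_index_file": {
        "file.managed": [
            {"name": "/var/www/index.html"},
            {"source": "salt://webserver/main.html"},
        ]
    }
}


def flatten_state(state):
    """Turn each state ID into (id, function, merged-arguments) tuples."""
    result = []
    for state_id, body in state.items():
        for function, arg_list in body.items():
            args = {}
            for item in arg_list:
                args.update(item)  # Each list item is a one-key mapping.
            result.append((state_id, function, args))
    return result


def resolve_source(source, file_root):
    """Map a salt:// source onto a path under the master's file root."""
    prefix = "salt://"
    if not source.startswith(prefix):
        raise ValueError("not a salt:// source")
    return posixpath.join(file_root, source[len(prefix):])


for state_id, function, args in flatten_state(STATE):
    print(state_id, function, args)

# "/srv/salt" is an assumed file root, used here only as an example.
print(resolve_source("salt://webserver/main.html", "/srv/salt"))
```

The second helper shows why the source is described as relative: the same `salt://` path lands in a different place on disk depending on the file root configured on the master.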

Note

The file server is a mechanism for Salt to send files out to the minions. Larger files will be broken up into chunks to be more easily sent over the encrypted communication channel. This makes the file server very handy. But keep in mind that Salt’s file server is not meant to be a generic file server like NFS or CIFS.

Salt comes with a number of built-in state modules to help create the descriptions that define how an entire host should be configured. The file state module is just a simple introduction. You can also define the users that should be present, the services (applications) that should be running, and which packages should be installed. Not only is there a wealth of state modules built in to Salt, but you can also write your own, which we’ll cover in Chapter 6.

You may have noticed that when we installed the package using the execution module directly, we gave a target host: every host (*). But when we showed the state, there was no target minion given. In the state system, there is a high-level abstraction that specifies which host should have which states. This is called the top file. While the states give a recipe for how a host should look, the top file says which hosts should have which recipes. We will discuss this in much more detail in Chapter 4.
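As a rough illustration of the idea behind the top file, the Python sketch below maps target patterns to lists of state names and asks which states apply to a given minion. The `TOP` mapping, the state names, and the function `states_for` are hypothetical; the real top file format is covered in Chapter 4.

```python
from fnmatch import fnmatchcase

# Hypothetical mapping of target patterns to the states they should receive.
TOP = {
    "*": ["common"],
    "web*": ["webserver"],
}


def states_for(minion_id, top=TOP):
    """Collect, in order and without duplicates, the states for one minion."""
    states = []
    for pattern, names in top.items():
        if fnmatchcase(minion_id, pattern):
            for name in names:
                if name not in states:
                    states.append(name)
    return states


print(states_for("web1"))
print(states_for("db1"))
```

The recipes themselves say nothing about hosts; the mapping alone decides that `web1` receives both recipes while `db1` receives only the common one.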

A Brief History

Like many projects and ideas, Salt was born out of necessity. I (Tom) had created a couple of in-house remote execution incarnations over the years. But I found that these and the other open source options didn’t quite have the power I was looking for. I then decided to base a new system on the fast ZeroMQ messaging layer. As I began adding more and more functionality, the state system just naturally appeared. Then, as the community grew, more and more functionality was added. But the core remote execution framework remained extensible.

Topology Options

Thus far, we have discussed Salt only as a single master with a number of connected minions. However, this is not the only option. You can divide up your minions and have them talk to an intermediate host called a syndication master. An example use case is when you have clusters of hosts that are geographically dispersed. You may have high-latency links between the clusters, but each cluster has a fast network locally. For example, you have a bunch of hosts in New York, another large cluster in Sydney, maybe another grouping in London, and, finally, all of your development in San Francisco. A syndication master will act as a proxy for the master.

You may even decide that you only want to use Salt’s execution modules and states without any master at all. A masterless minion setup is briefly discussed in “Masterless Minions”.

Lastly, you may want to allow some users to harness the power Salt provides without giving them access directly to the main master. The peer publisher system allows you to give special access to some minions. This could allow you to let developers run deployment commands without giving them access to the entire set of tools that Salt provides.

Tip

The various topologies mentioned here are not necessarily mutually exclusive. You can use them individually, or even mix and match them. For example, you could have the majority of your infrastructure managed using the standard master–minion topology, but then have your more security-sensitive hosts managed via a masterless setup. Salt’s basic usage and core functionality remain the same; only the implementation details differ.

Extending Salt

Out of the box, Salt is extremely powerful and comes with a number of modules to help you administer a variety of operating systems. However, no matter how powerful the system is or how complete it attempts to be, it cannot be all things to all people. As a result, Salt’s extensibility underpins the entire system. You can dynamically generate the data in the configuration files using a templating engine (e.g., Jinja or Mako), a DSL, or just straight code. Or you can write your own custom execution modules using Python. Salt provides a number of libraries and data structures, which allow custom modules to peer into the core of the Salt system to extract data or even run other modules. Once you have the concept of extending using modules, you can then write your own states to enforce whatever logic you see fit.
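Because execution modules are just Python, a custom one can be as small as a single function. The sketch below is a hypothetical example, imagined as a file named `diskcheck.py`; the module name, the function name, and the way it would be loaded are assumptions, and writing real modules is covered later in the book.

```python
"""Hypothetical custom module, imagined as a file named diskcheck.py."""

import shutil


def percent_used(path="/"):
    """Return the percentage of disk space used at the given path."""
    usage = shutil.disk_usage(path)
    if usage.total == 0:
        return 0.0  # Avoid dividing by zero on unusual filesystems.
    return round(usage.used / usage.total * 100, 1)


if __name__ == "__main__":
    # Run directly for a quick local check.
    print(percent_used("."))
```

Following the `module.function` pattern seen earlier with `pkg.install`, a function like this would presumably be invoked as `diskcheck.percent_used` once Salt had loaded the module.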

As powerful as custom modules or custom states may be, they are only the beginning of what you can change. As previously mentioned, the format of the state files is YAML. But you can add your own renderer to convert any data file into a data structure that Salt can handle. Even the data about a host (i.e., grains and pillar) can be altered and customized.
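The essence of a renderer is a function that turns the text of a data file into the nested data structure the rest of the system expects. The Python sketch below shows that idea for JSON input. The function name `render_json` and the sample document are assumptions for illustration; Salt's actual renderer interface is not shown here.

```python
import json

# The same state as before, expressed in JSON rather than YAML.
JSON_STATE = """
{
  "webserver_main_index_file": {
    "file.managed": [
      {"name": "/var/www/index.html"},
      {"source": "salt://webserver/main.html"}
    ]
  }
}
"""


def render_json(text):
    """Convert JSON text into the mapping a state file is expected to produce."""
    data = json.loads(text)
    if not isinstance(data, dict):
        raise ValueError("a state file must render to a mapping")
    return data


rendered = render_json(JSON_STATE)
print(rendered["webserver_main_index_file"]["file.managed"])
```

As long as the result has the same shape, the format of the file on disk is a matter of preference.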

All of these customizations do not live in their own sandbox. They are available to the rest of Salt, and the rest of Salt is available to them. Thus, you can write your own custom execution module and call it using the state system. Or you can write your own state that uses only the modules that ship with Salt.

All of this makes Salt very powerful and a bit overwhelming. This book is here to guide you through the basics and give some very simple examples of what Salt can do. Just to sweeten the pot, Salt has a very active community that is here to help you when you run into obstacles.

Are you ready to get salted?

1 This handshake between the minions and the master is the same as the handshake used by SSH. But the handshake for Salt is simply implemented on top of ZeroMQ.

2 ZeroMQ is an open source, asynchronous messaging library aimed at large, distributed systems.

3 Grains are data about the minions, stored on the minions. They are discussed at length in Chapter 5.

4 Pillar is data about the minion stored on the master. This is also discussed at length in Chapter 5.
