The most powerful processor in your computer may not be the CPU. Modern graphics processing units (GPUs) usually have something on the order of 10 to 100 times more raw compute power than the general-purpose CPU. However, the GPU is a very different beast from the CPU, and we can’t just run ordinary Haskell programs on it. A GPU consists of a large number of parallel processing units, each of which is much less powerful than one core of your CPU, so to unlock the power of a GPU we need a highly parallel workload. Furthermore, the processors of a GPU all run exactly the same code in lockstep, so they are suitable only for data-parallel tasks where the operations to perform on each data item are identical.

In recent years GPUs have become less graphics-specific and more suitable for performing general-purpose parallel processing tasks. However, GPUs are still programmed in a different way from the CPU because they have a different instruction set architecture. A special-purpose compiler is needed to compile code for the GPU, and the source code is normally written in a language that resembles a restricted subset of C. Two such languages are in widespread use: NVidia’s CUDA and OpenCL. These languages are very low-level and expose lots of details about the workings of the GPU, such as how and when to move data between the CPU’s memory and the GPU’s memory.

Clearly, we would like to be able to make use of the vast computing power of the GPU from Haskell without having to write code in CUDA or OpenCL. This is where the Accelerate library comes in: Accelerate is an embedded domain-specific language (EDSL) for programming the GPU. It allows us to write Haskell code in a somewhat stylized form and have it run directly on the GPU. For certain tasks, we can obtain orders of magnitude speedup by using Accelerate.

During the course of this chapter, I’ll be introducing the various concepts of Accelerate, starting with the basic data types and operations and progressing to full-scale examples that run on the GPU.

As with Repa in the previous chapter, I’ll be illustrating many of

the Accelerate operations by typing expressions into GHCi.

Accelerate comes with an interpreter, which means that for

experimenting with Accelerate code, you don’t need a machine with a

GPU. To play with examples yourself, first make sure the accelerate

package is installed:

$ cabal install accelerate

The accelerate package provides the basic infrastructure, which includes the Data.Array.Accelerate module for constructing array computations, and Data.Array.Accelerate.Interpreter for interpreting them. To actually run an Accelerate computation on a GPU, you will also need a supported GPU card and the accelerate-cuda package; I’ll cover that later in Running on the GPU.

When you have the accelerate package installed, you can start up

GHCi and import the necessary modules:

$ ghci Prelude> import Data.Array.Accelerate as A Prelude A> import Data.Array.Accelerate.Interpreter as I Prelude A I>

As we’ll see, Accelerate shares many concepts with Repa. In particular, array shapes and indices are the same, and Accelerate also has the concept of shape-polymorphic operations like fold.

I mentioned earlier that Accelerate is an embedded domain-specific language for programming GPUs. More specifically, it is a deeply embedded DSL. This means that programs are written in Haskell syntax using operations of the library, but the method by which the program runs is different from a conventional Haskell program. A program fragment that uses Accelerate works like this:

- The Haskell code generates a data structure in an internal representation that the programmer doesn’t get to see.

-

This data structure is then compiled into GPU code using the

accelerate-cudapackage and run directly on the GPU. When you don’t have a GPU, theacceleratepackage interprets the code instead, using Accelerate’s built-in interpreter. Both methods give the same results, but of course running on the GPU should be far faster.

Both steps happen while the Haskell program is running; there’s no extra compile step, apart from compiling the Haskell program itself.

By the magic of Haskell’s overloading and abstraction facilities, the Haskell code that you write using Accelerate usually looks much like ordinary Haskell code, even though it generates another program rather than actually producing the result directly.

While reading this chapter, you probably want to have a copy of the Accelerate API documentation at hand.

As with Repa, Accelerate is a framework for programming with arrays. An Accelerate computation takes arrays as inputs and delivers one or more arrays as output. The type of Accelerate arrays has only two parameters, though:

dataArrayshe

Here, e is the element type, and sh is the shape. There is no representation type. Even though Accelerate does have delayed arrays internally and compositions of array operations are fused in much the same way as in Repa, arrays are not explicitly tagged with a representation type.

Shapes and indices use the same data types as Repa (for more details see Arrays, Shapes, and Indices):

dataZ=Zdatatail:.head=tail:.head

And there are some convenient type synonyms for common shapes:

typeDIM0=ZtypeDIM1=DIM0:.InttypeDIM2=DIM1:.Int

Because arrays of dimensionality zero and one are common, the library provides type synonyms for those:

typeScalare=ArrayDIM0etypeVectore=ArrayDIM1e

You can build arrays and experiment with them in ordinary Haskell code

using fromList:

fromList::(Shapesh,Elte)=>sh->[e]->Arrayshe

As we saw with Repa, we have to be careful to give GHC enough type

information to fix the type of the indices (to Int), and the same is

true in Accelerate. Let’s build a 10-element vector using fromList:

> fromList (Z:.10) [1..10] :: Vector Int Array (Z :. 10) [1,2,3,4,5,6,7,8,9,10]

Similarly, we can make a two-dimensional array, with three rows of five columns:

> fromList (Z:.3:.5) [1..] :: Array DIM2 Int Array (Z :. 3 :. 5) [1,2,3,4,5,6,7,8,9,10,11,12,13,14,15]

The operation for indexing one of these arrays is indexArray:

> let arr = fromList (Z:.3:.5) [1..] :: Array DIM2 Int > indexArray arr (Z:.2:.1) 12

(There is also a ! operator that performs indexing, but unlike

indexArray it can only be used in the context of an Accelerate

computation, which we’ll see shortly.)

One thing to remember is that in Accelerate, arrays cannot be nested; it is impossible to build an array of arrays. This is because arrays must be able to be mapped directly into flat arrays on the GPU, which has no support for nested arrays.

We can, however, have arrays of tuples. For example:

> fromList (Z:.2:.3) (Prelude.zip [1..] [1..]) :: Array DIM2 (Int,Int) Array (Z :. 2 :. 3) [(1,1),(2,2),(3,3),(4,4),(5,5),(6,6)]

Internally, Accelerate will translate an array of tuples into a tuple of arrays; this is done entirely automatically, and we don’t need to worry about it. Arrays of tuples are a very useful structure, as we shall see.

So far, we have been experimenting with arrays in the context of ordinary Haskell code; we haven’t constructed an actual Accelerate computation over arrays yet. An Accelerate computation takes the form run E, where:

run::Arraysa=>Acca->a

The expression E has type Acc a, which means “an accelerated computation that delivers a value of type a.” The

Arrays class allows a to be either an array or a tuple of

arrays. A value of type Acc a is really a data structure (we’ll see

in a moment how to build it), and the run function evaluates the

data structure to produce a result. There are two versions of run:

one exported by Data.Array.Accelerate.Interpreter that we will be

using for experimentation and testing, and another exported by

Data.Array.Accelerate.CUDA (in the accelerate-cuda package) that

runs the computation on the GPU.

Let’s try a very simple example. Starting with the 3×5 array of

Int from the previous section, let’s add one to every element:

> let arr = fromList (Z:.3:.5) [1..] :: Array DIM2 Int > run $ A.map (+1) (use arr) Array (Z :. 3 :. 5) [2,3,4,5,6,7,8,9,10,11,12,13,14,15,16]

Breaking this down, first we call A.map, which is the map function

from Data.Array.Accelerate; recall that we used import

Data.Array.Accelerate as A earlier. We have to use the

qualified name, because there are two map functions in scope:

A.map and Prelude.map.

Here is the type of A.map:

A.map::(Shapeix,Elta,Eltb)=>(Expa->Expb)->Acc(Arrayixa)->Acc(Arrayixb)

A few things will probably seem unusual about this type. First let’s look at the second argument. This is the array

to map over, but rather than just an Array ix a, it is an Acc (Array ix a)—that is, an array in the Accelerate world rather than

the ordinary Haskell world. We need to somehow turn our Array DIM2

Int into an Acc (Array DIM2 Int). This is what the use function

is for:

use::Arraysarrays=>arrays->Accarrays

The use function is the way to take arrays from Haskell and inject

them into an Accelerate computation. This

might actually involve copying the array from the computer’s main

memory into the GPU’s memory.

The first argument to A.map has type Exp a -> Exp b. Here, Exp

is a bit like Acc. It represents a computation in the world of

Accelerate, but whereas Acc is a computation delivering an array,

Exp is a computation delivering a single value.

In the example we passed (+1) as the first argument to map. This

expression is overloaded in Haskell with type Num a => a -> a, and

we’re accustomed to seeing it used at types like Int -> Int and

Double -> Double. Here, however, it is being used at type Exp Int

-> Exp Int; this is possible because Accelerate provides an instance

for Num (Exp a), so expressions built using integer

constants and overloaded Num operations work just fine in the world

of Exp.[22]

Here’s another example, which squares every element in the array:

> run $ A.map (^2) (use arr) Array (Z :. 3 :. 5) [1,4,9,16,25,36,49,64,81,100,121,144,169,196,225]

We can inject values into the Accelerate world with functions such as

use (and some more that we’ll see shortly), but the only way to get

data out of an Accelerate computation is to run it with run, and

then the result becomes available to the caller as an ordinary Haskell

value.

Sometimes we want to use a single value in a place where the API only

allows an array; this is quite common in Accelerate because most

operations are over arrays. For example, the result of run contains

only arrays, not scalars, so if we want to return a single value, we have to

wrap it in an array first. The unit operation is provided for this

purpose:

unit::Elte=>Expe->Acc(Scalare)

Recall that Scalar is a type synonym for Array DIM0; an array with

zero dimensions has only one element. Now we can return a single

value from run:

> run $ unit (3::Exp Int) Array (Z) [3]

The dual to unit is the, which extracts a single value from a

Scalar:

the::Elte=>Acc(Scalare)->Expe

The ! operator indexes into an array:

(!)::(Shapeix,Elte)=>Acc(Arrayixe)->Expix->Expe

Unlike the indexArray function that we saw earlier, the ! operator

works in the Accelerate world; the array argument has type Acc (Array ix e), and the index is an Exp ix. So how do we get from an ordinary index like Z:.3 to an Exp (Z:.Int)? There is a handy

function index1 for exactly this purpose:

index1::ExpInt->Exp(Z:.Int)

So now we can index into an array. Putting these together in GHCi:

> let arr = fromList (Z:.10) [1..10] :: Array DIM1 Int > run $ unit (use arr ! index1 3) Array (Z) [4]

We saw earlier how to create arrays using fromList and then inject them into the Acc world with use. This is not a particularly

efficient way to create arrays. Even if the compiler is clever enough

to optimize away the intermediate list, the array data will still have

to be copied over to the GPU’s memory. So it’s usually better to

create arrays inside Acc. The Accelerate library provides a few

ways to create arrays inside Acc; the simplest one is fill:

fill::(Shapesh,Elte)=>Expsh->Expe->Acc(Arrayshe)

The fill operation creates an array of the specified shape in which

all elements have the same value. We can create arrays in which the

elements are drawn from a sequence by using enumFromN and

enumFromStepN:

enumFromN::(Shapesh,Elte,IsNume)=>Expsh->Expe->Acc(Arrayshe)enumFromStepN::(Shapesh,Elte,IsNume)=>Expsh->Expe->Expe->Acc(Arrayshe)

In enumFromN, the first argument is the shape and the second is the value of the first element. For example, enumFromN (index1 N) M is the same as use (fromList (Z:.N) [M..]).

The enumFromStepN function is the same, except that we can specify

the increment between the element values. For instance, to create a

two-dimensional array of shape three rows of five columns, where the elements

are drawn from the sequence [15,14..]:

> run $ enumFromStepN (index2 3 5) 15 (-1) :: Array DIM2 Int Array (Z :. 3 :. 5) [15,14,13,12,11,10,9,8,7,6,5,4,3,2,1]

Note that we used index2, the two-dimensional version of index1

that we saw earlier, to create the shape argument.

A more general way to create arrays is provided by generate:

generate::(Shapeix,Elta)=>Expix->(Expix->Expa)->Acc(Arrayixa)

This time, the values of the elements are determined by a user-supplied

function from Exp ix to Exp a; that is, the function that will be

applied to each index in the array to determine the element value at

that position. This is exactly like the fromFunction operation

we used in Repa, except that here we must supply a function in the

Exp world rather than an arbitrary Haskell function.

For instance, to create a two-dimensional array in which every element

is given by the sum of its x and y coordinates, we can use

generate:

> run $ generate (index2 3 5) (\ix -> let Z:.y:.x = unlift ix in x + y) Array (Z :. 3 :. 5) [0,1,2,3,4,1,2,3,4,5,2,3,4,5,6]

Let’s look in more detail at the function argument:

\ix->letZ:.y:.x=unliftixinx+y

The function as a whole must have type Exp DIM2 -> Exp Int, and

hence ix has type Exp DIM2. We need to extract the x and y

values from the index, which means we need to deconstruct the Exp

DIM2. The function unlift does this; in general, you should think

of unlift as a way to take apart a structured value inside an Exp.

It works for tuples and indices. In the previous example, we’re using

unlift at the following type:[23]

unlift::Exp(Z:.Int:.Int)->Z:.ExpInt:.ExpInt

The result is a DIM2 value in the Haskell world, so we can pattern

match against Z:.x:.y to extract the x and y values, both of type Exp Int. Then x + y gives us the sum of x and y as an Exp Int, by virtue of the overloaded + operator.

There is a dual to unlift, unsurprisingly called lift, which does

the opposite transformation. In fact, the index2 function that we

used in the generate example earlier is defined in terms of lift:

index2::ExpInt->ExpInt->ExpDIM2index2ij=lift(Z:.i:.j)

This use of lift has the following type:

lift::Z:.ExpInt:.ExpInt->Exp(Z:.Int:.Int)

The lift and unlift functions are essential when we’re working

with indices in Accelerate, and as we’ll see later, they’re useful

for working with tuples as well.

The zipWith function combines two arrays to produce a third array

by applying the supplied function to corresponding elements of the

input arrays:

zipWith::(Shapeix,Elta,Eltb,Eltc)=>(Expa->Expb->Expc)->Acc(Arrayixa)->Acc(Arrayixb)->Acc(Arrayixc)

The first argument is the function to apply to each pair of elements,

and the second and third arguments are the input arrays. For example,

zipping two arrays with (+):

> let a = enumFromN (index2 2 3) 1 :: Acc (Array DIM2 Int) > let b = enumFromStepN (index2 2 3) 6 (-1) :: Acc (Array DIM2 Int) > run $ A.zipWith (+) a b Array (Z :. 2 :. 3) [7,7,7,7,7,7]

Here we zipped together two arrays of identical shape, but what

happens if the shapes are different? The type of zipWith requires

that the input arrays have identical dimensionality, but the sizes of

the dimensions might be different. For example, we’ll use the same

2×3 array as before, but zip it with a 3×5 array containing

elements [10, 20..]:

> let a = enumFromN (index2 2 3) 1 :: Acc (Array DIM2 Int) > let b = enumFromStepN (index2 3 5) 10 10 :: Acc (Array DIM2 Int) > run $ A.zipWith (+) a b Array (Z :. 2 :. 3) [11,22,33,64,75,86]

What happened is that zipWith used the overlapping intersection of

the two arrays. With two-dimensional arrays, you can visualize it like

this: lay one array on top of the other, with their upper-left-hand

corners at the same point, and pair together the elements

that coincide. The final array has the shape of the overlapping

portion of the two arrays.

We saw earlier that simple integer literals and numeric operations are

automatically operations in Exp by virtue of being overloaded. But

what if we already have an Int value and we need an Exp Int? This

is what the function constant is for:

constant::Eltt=>t->Expt

Note that constant works only for instances of Elt,

which you may recall is the class of types allowed to be array

elements, including numeric types, indices, and tuples of Elts.

As our first full-scale example, we’ll again tackle the Floyd-Warshall shortest paths algorithm. For details of the algorithm, please see the Repa example in Example: Computing Shortest Paths; the algorithm here will be identical, except that we’re going to run it on a GPU using Accelerate to see how much faster it goes.

Here are the type of graphs, represented as adjacency matrices:

fwaccel.hs

typeWeight=Int32typeGraph=ArrayDIM2Weight

The algorithm is a sequence of steps, each of which takes a value for

k and a Graph as input and produces a new Graph. First, we’ll

write the code for an individual step before we see how to put

multiple steps together. Here is the code for a step:

step::Acc(ScalarInt)->AccGraph->AccGraphstepkg=generate(shapeg)sp--

wherek'=thek--

sp::ExpDIM2->ExpWeightspix=let(Z:.i:.j)=unliftix--

inA.min(g!(index2ij))--

(g!(index2ik')+g!(index2k'j))

The

stepfunction takes two arguments:k, which is the iteration number, andg, which is the graph produced by the previous iteration. In each step, we’re computing the lengths of the shortest paths between each two elements, using only vertices up tok. The graph from the previous iteration,g, gives us the lengths of the shortest paths using vertices up tok - 1. The result of this step is a newGraph, produced by calling thegeneratefunction. The new array has the same shape asg, and the elements of the array are determined by the functionsp, defined in thewhereclause.

The

kargument is passed in as a scalar array; the sidebar explains why. To extract the value from the array, we callthe.

The

spfunction takes the index of an element in the array and returns the value of the element at that position. We need tounliftthe input index to extract the two components,iandj.

This is the core of the algorithm; to determine the length of the shortest path between

iandj, we take the minimum of the previous shortest path fromitoj, and the path that goes fromitokand then fromktoj. All of these lookups in theggraph are performed using the!operator, and usingindex2to construct the indices.

Now that we have the step function, we can write the wrapper that

composes the sequence of step calls together:

shortestPathsAcc::Int->AccGraph->AccGraphshortestPathsAccng0=foldl1(>->)stepsg0--

wheresteps::[AccGraph->AccGraph]--

steps=[step(unit(constantk))|k<-[0..n-1]]--

First we construct a list of the steps, where each takes a

Graphand delivers aGraph.

The list of steps is constructed by applying

stepto each value ofkin the sequence0 .. n-1, wrapping thekvalues up as scalar arrays usingunitandconstant.

To put the sequence together, Accelerate provides a special operation designed for this task:

(>->)::(Arraysa,Arraysb,Arraysc)=>(Acca->Accb)->(Accb->Accc)->Acca->AcccThis is called the pipeline operator, because it is used to connect two

Acccomputations together in a pipeline, where the output from the first is fed into the input of the second. We could achieve this with simple function composition, but the advantage of using the>->operator is that it tells Accelerate that there is no sharing between the two computations, and any intermediate arrays used by the first computation can be garbage-collected when the second begins. Without this operator, it is possible to fill up memory when running algorithms with many iterations. So ourshortestPathsAccfunction connects together the sequence ofstepcalls by left-folding with>->and then passesg0as the input to the pipeline.

Now that we have defined the complete computation, we can write a function that wraps run around it:

shortestPaths::Graph->GraphshortestPathsg0=run(shortestPathsAccn(useg0))whereZ:._:.n=arrayShapeg0

We can try the program on test data, using the Accelerate interpreter:

> shortestPaths testGraph Array (Z :. 6 :. 6) [0,16,999,13,20,20,19,0,999,5,4,9,11,27,0,24,31,31,18,3, 999,0,7,7,15,4,999,1,0,8,11,17,999,14,21,0]

To run the program on a real GPU, you’ll need a supported GPU card

and some additional software. Consult the Accelerate documentation

to help you get things set up. Then install the

accelerate-cuda package:

$ cabal install accelerate-cuda -fdebug

I’ve enabled debugging support here with the -fdebug flag, which

lets us pass some extra options to the program to see what the GPU is

doing.

To use Accelerate’s CUDA support, we need to use:

importData.Array.Accelerate.CUDA

in place of:

importData.Array.Accelerate.Interpreter

A version of the shortest paths program that has this is in

fwaccel-gpu.hs. Compile it in the usual way:

$ ghc -O2 fwaccel.hs -threaded

The program includes a benchmarking wrapper that generates a large graph over which to run the algorithm. Let’s run it on a graph with 2,000 nodes:[24]

$ ./fwaccel 2000 +RTS -s ... Total time 14.71s ( 16.25s elapsed)

For comparison, I tried the Repa version of this program on a graph of the same size, using seven cores on the same machine:[25]

$ ./fwdense1 2000 +RTS -s -N7 ... Total time 259.78s ( 40.13s elapsed)

So the Accelerate program running on the GPU is significantly faster than Repa. Moreover, about 3.5s of the runtime of the Accelerate program is taken up by initializing the GPU on this machine, which we can see by running the program with a small input size.

When the accelerate-cuda package is compiled with -fdebug, there

are a few extra debugging options available. These are the most useful ones:

-

-dverbose - Prints some information about the type and capabilities of the GPU being used.

-

-ddump-cc - Prints information about CUDA kernels as they are compiled and run. Using this option will tell you whether your program is generating the number of kernels that you were expecting.

For a more complete list, see the accelerate-cuda.cabal file in the

accelerate-cuda package sources.

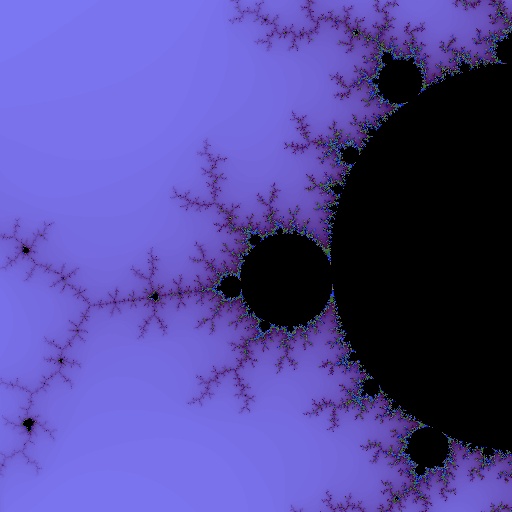

In this second example, we’ll build a Mandelbrot set generator that runs on the GPU. The end result will be the picture in Figure 6-1. Generating an image of the Mandelbrot set is a naturally parallel process—each pixel is independent of the others—but there are some aspects to this problem that make it an interesting example to program using Accelerate. In particular, we’ll see how to use conditionals and to work with arrays of tuples.

The Mandelbrot set is a mathematical construction over the complex plane, which is the two-dimensional plane of complex numbers. A particular point is said to be in the set if, when the following equation is repeatedly applied, the magnitude of z (written as |z|) does not diverge to infinity:

where c is the point on the plane (a complex number), and z0 = c.

In practice, we iterate the equation for a fixed number of times, and if it has not diverged at that point, we declare the point to be in the set. Furthermore, to generate a pretty picture, we remember the iteration at which each point diverged and map the iteration values to a color gradient.

We know that |z| will definitely diverge if it is greater than 2. The magnitude of a complex number x + iy is given by √(x2 + y2), so we can simplify the condition by squaring both sides, giving us this condition for divergence: x2 + y2 > 4.

Let’s express this using Accelerate. First, we want a type for

complex numbers. Accelerate lets us work with tuples, so we can

represent complex numbers as pairs of floating point numbers. Not all

GPUs can work with Doubles, so for the best compatibility we’ll use

Float:

mandel/mandel.hs

typeF=FloattypeComplex=(F,F)typeComplexPlane=ArrayDIM2Complex

We’ll be referring to Float a lot, so the F type synonym helps to

keep things readable.

The following function, next, embodies the main Mandelbrot formula:

it computes the next value of z for a given point c.

next::ExpComplex->ExpComplex->ExpComplexnextcz=c`plus`(z`times`z)

We can’t use the normal + and * operations here, because

there is no instance of Num for Exp Complex. In other words, Accelerate doesn’t know

how to add or multiply our complex numbers, so we have to define these

operations ourselves. First, plus:

plus::ExpComplex->ExpComplex->ExpComplexplusab=...

To sum two complex numbers, we need to sum the components. But

how can we access the components? We cannot pattern match on

Exp Complex. There are a few different ways to do it, and we’ll

explore them briefly. Accelerate provides operations for selecting the

components of pairs in Exp, namely:

fst::(Elta,Eltb)=>Exp(a,b)->Expasnd::(Elta,Eltb)=>Exp(a,b)->Expb

So we could write plus like this:

plus::ExpComplex->ExpComplex->ExpComplexplusab=...whereax=A.fstaay=A.sndabx=A.fstbby=A.sndb

But how do we construct the result? We want to write something like

(ax+bx, ay+by), but this has type (Exp F, Exp F), whereas we want

Exp (F,F). Fortunately the lift function that we saw earlier

performs this transformation, so the result is:

plus::ExpComplex->ExpComplex->ExpComplexplusab=lift(ax+bx,ay+by)whereax=A.fstaay=A.sndabx=A.fstbby=A.sndb

In fact, we could do a little better, since A.fst and A.snd are

just instances of unlift, and we could do them both in one go:

plus::ExpComplex->ExpComplex->ExpComplexplusab=lift(ax+bx,ay+by)where(ax,ay)=unlifta(bx,by)=unliftb

Unfortunately, if you try this you will find that there isn’t enough type information for GHC, so we have to help it out a bit:

plus::ExpComplex->ExpComplex->ExpComplexplusab=lift(ax+bx,ay+by)where(ax,ay)=unlifta::(ExpF,ExpF)(bx,by)=unliftb::(ExpF,ExpF)

We can go a little further because Accelerate provides some utilities

that wrap a function in lift and unlift. For a two-argument

function, the right variant is called lift2:

plus::ExpComplex->ExpComplex->ExpComplexplus=lift2fwheref::(ExpF,ExpF)->(ExpF,ExpF)->(ExpF,ExpF)f(x1,y1)(x2,y2)=(x1+x2,y1+y2)

Unfortunately, again we had to add the type signature to get it to typecheck, but it does aid readability. This is perhaps as close to “natural” as we can get for this definition: the necessary lifting and unlifting are confined to just one place.

We also need to define times, which follows the same pattern as

plus, although of course this time we are multiplying the two

complex numbers together:

times::ExpComplex->ExpComplex->ExpComplextimes=lift2fwheref::(ExpF,ExpF)->(ExpF,ExpF)->(ExpF,ExpF)f(ax,ay)(bx,by)=(ax*bx-ay*by,ax*by+ay*bx)

So now we can compute zn+1 given z and c. But we need to think about the program as a whole. For each point, we need to iterate this process until divergence, and then remember the number of iterations at which divergence happened. This creates a small problem: GPUs are designed to do the same thing to lots of different data at the same time, whereas we want to do something different depending on whether or not a particular point has diverged. So in practice, we can’t do what we would normally do in a single-threaded language and iterate each point until divergence. Instead, we must find a way to apply the same operation to every element of the array for a fixed number of iterations.

There is a conditional operation in Accelerate, with this type:

(?)::Eltt=>ExpBool->(Expt,Expt)->Expt

The first argument is an Exp Bool, and the second argument is a pair

of expressions. If the Boolean evaluates to true, the result is

the first component of the pair; otherwise it is the second.

However, as a rule of thumb, using conditionals in GPU code is considered “bad” because conditionals cause SIMD divergence. This means that when the GPU hits a conditional instruction, it first runs all the threads that take the true branch and then runs the threads that take the false branch. Of course if you have nested conditionals, the amount of parallelism rapidly disappears.

We can’t avoid some kind of conditional in the Mandelbrot

example, but we can make sure there is only a bounded amount of

divergence by having just one conditional per iteration and a fixed

number of iterations. The trick we’ll use is to keep a pair (z,i) for

every array element, where i is the iteration at which that point

diverged. So at each iteration, we do the following:

-

Compute

z' = next c z. -

If it is greater than four, the result is

(z,i). -

Otherwise, the result is

(z',i+1)

The implementation of this sequence is the iter function, defined as

follows:

iter::ExpComplex->Exp(Complex,Int)->Exp(Complex,Int)itercp=let(z,i)=unliftp::(ExpComplex,ExpInt)--

z'=nextcz--

in(dotz'>*4.0)?--

(p--

,lift(z',i+1)--

)

The first thing to do is

unlift pso we can access the components of the pair.

Next, we compute

z'by callingnext.

Now that we have

z'we can do the conditional test using the?operator. Thedotfunction computesx2 +y2 wherexandyare the components ofz; it follows the same pattern asplusandtimesso I’ve omitted its definition.

If the condition evaluates to true, we just return the original

p.

In the false case, then we return the new

z'andi+1.

The algorithm needs two arrays: one array of c values that will

be constant throughout the computation, and a second array of (z,i)

values that will be recomputed by each iteration. Our arrays are

two-dimensional arrays indexed by pixel coordinates because the aim is to

generate a picture from the iteration values at each pixel.

The initial complex plane of c values is generated by a function

genPlane:

genPlane::F->F->F->F->Int->Int->AccComplexPlane

Its definition is rather long so I’ve omitted it here, but

essentially it is a call to generate

(Creating Arrays Inside Acc).

From the initial complex plane we can generate the initial array of

(z,i) values, which is done by initializing each z to the

corresponding c value and i to zero. In the code, this can be

found in the mkinit function.

Now we can put the pieces together and write the code for the complete algorithm:

mandelbrot::F->F->F->F->Int->Int->Int->Acc(ArrayDIM2(Complex,Int))mandelbrotxyx'y'screenXscreenYmax_depth=iterategozs0!!max_depth--

wherecs=genPlanexyx'y'screenXscreenY--

zs0=mkinitcs--

go::Acc(ArrayDIM2(Complex,Int))->Acc(ArrayDIM2(Complex,Int))go=A.zipWithitercs--

csis our static complex plane generated bygenPlane.

zs0is the initial array of(z,i)values.

The function

goperforms one iteration, producing a new array of(z,i), and it is expressed by zippingiterover bothcsand the current array of(z,i).

To perform all the iterations, we simply call the ordinary list function

iterate:iterate::(a->a)->a->[a]and take the element at position

depth, which corresponds to thegofunction having been applieddepthtimes. Note that in this case, we don’t want to use the pipeline operator>->because the iterations share the arraycs.

The complete program has code to produce an output file in PNG format,

by turning the Accelerate array into a Repa array and then using

the repa-devil library that we saw in

Example: Image Rotation. To compile the program, install the

accelerate and accelerate-cuda packages as before, and then:

$ ghc -O2 -threaded mandel.hs

Then generate a nice big image (again, this is running on an Amazon EC2 Cluster GPU instance):

$ rm out.png; ./mandel --size=4000 +RTS -s ... Total time 8.40s ( 10.56s elapsed)

[22] This comes with a couple of extra constraints, which we won’t go into here.

[23] The unlift function is

actually a method of the Unlift class, which has instances for

indices (of any dimensionality) and various sizes of tuples. See the

Accelerate documentation for details.

[24] These results were obtained on an Amazon EC2 Cluster GPU instance that had an NVidia Tesla card. I used CUDA version 4.

[25] Using all eight cores was slower than using seven.

Get Parallel and Concurrent Programming in Haskell now with the O’Reilly learning platform.

O’Reilly members experience books, live events, courses curated by job role, and more from O’Reilly and nearly 200 top publishers.