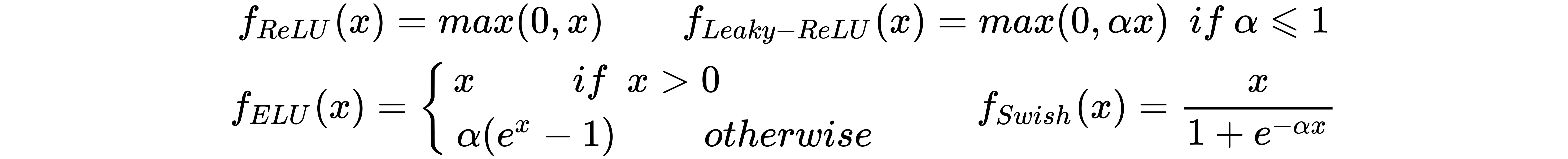

These functions are all linear (or quasi-linear, in the case of Swish) when x > 0, while they differ when x < 0. Some of them, such as ReLU, are not differentiable at x = 0; in that case, the derivative is conventionally set to 0. The most common functions are as follows:

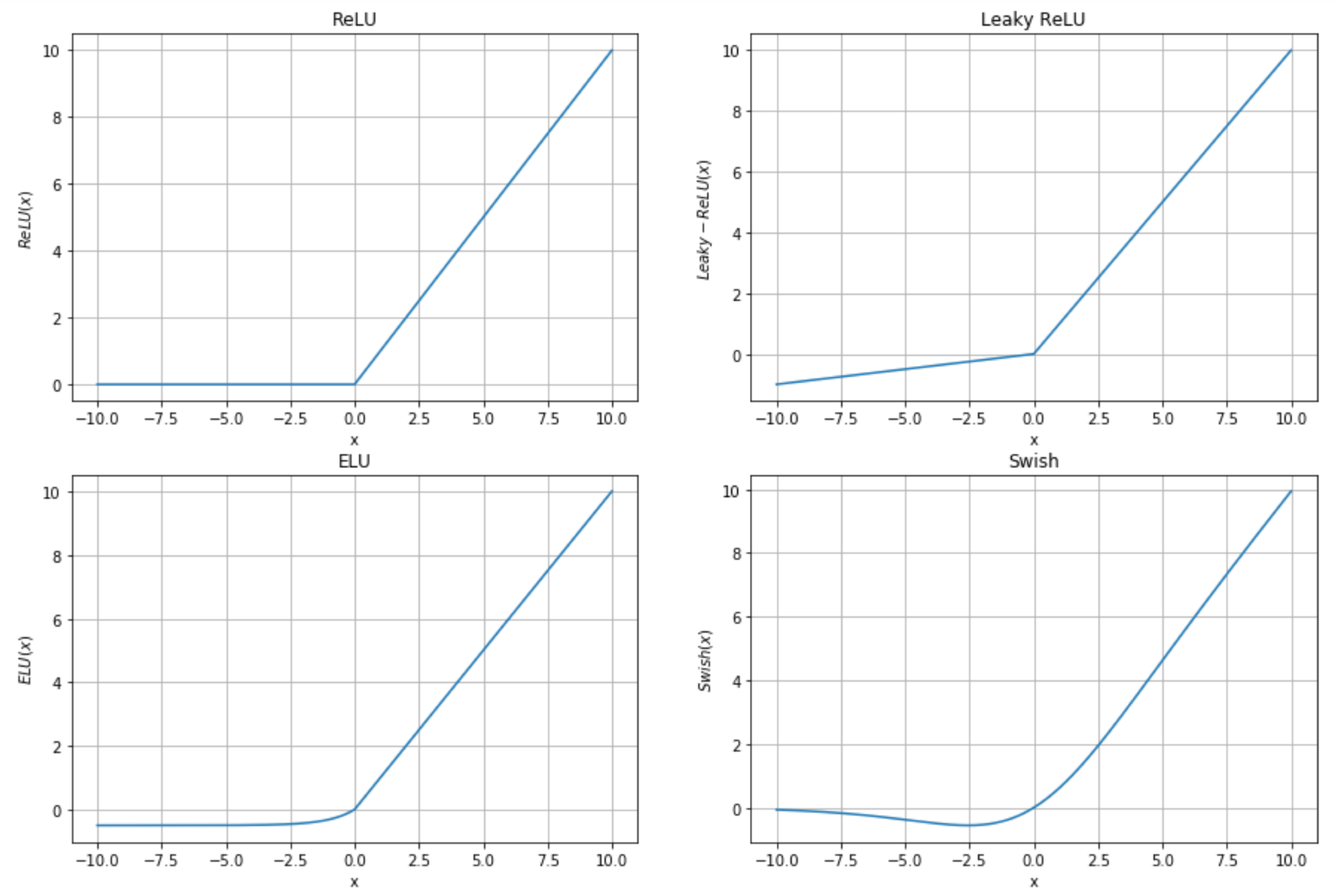
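As a rough illustration, the functions of this family can be sketched in a few lines of plain Python. The formulas in the figure are not reproduced in the text, so this sketch assumes the standard definitions of ReLU, Leaky ReLU, ELU, and Swish. The function names, the default `alpha` values, and the helper `relu_grad` are illustrative choices, not taken from the original.

```python
import math


def relu(x):
    """ReLU: identity for x > 0, zero otherwise."""
    return x if x > 0.0 else 0.0


def relu_grad(x):
    """ReLU derivative: 1 for x > 0, 0 for x < 0.

    At x = 0 the function is not differentiable; the value is
    set to 0 by convention.
    """
    return 1.0 if x > 0.0 else 0.0


def leaky_relu(x, alpha=0.01):
    """Leaky ReLU (assumed default slope alpha = 0.01 for x <= 0)."""
    return x if x > 0.0 else alpha * x


def elu(x, alpha=1.0):
    """ELU (assumed default alpha = 1.0): exponential branch for x <= 0."""
    return x if x > 0.0 else alpha * (math.exp(x) - 1.0)


def swish(x):
    """Swish: x * sigmoid(x), written to avoid overflow for large |x|."""
    if x >= 0.0:
        return x / (1.0 + math.exp(-x))
    e = math.exp(x)  # safe: x < 0, so exp(x) is in (0, 1)
    return x * e / (1.0 + e)


if __name__ == "__main__":
    # Compare the functions on a few negative, zero, and positive inputs
    for x in (-2.0, -0.5, 0.0, 0.5, 2.0):
        print(
            f"x={x:+.1f}  relu={relu(x):+.4f}  leaky={leaky_relu(x):+.4f}  "
            f"elu={elu(x):+.4f}  swish={swish(x):+.4f}"
        )
```

For positive inputs, the first three functions return x unchanged and Swish approaches x as x grows, while for negative inputs each function behaves differently, which is consistent with the description above.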

The corresponding plots are shown in the following diagram:

The basic (and also the most commonly employed) function is the ReLU, which has a constant gradient when x > 0, while both the function and its gradient are zero for x < 0. This function is very often employed in visual processing when the input is normally ...