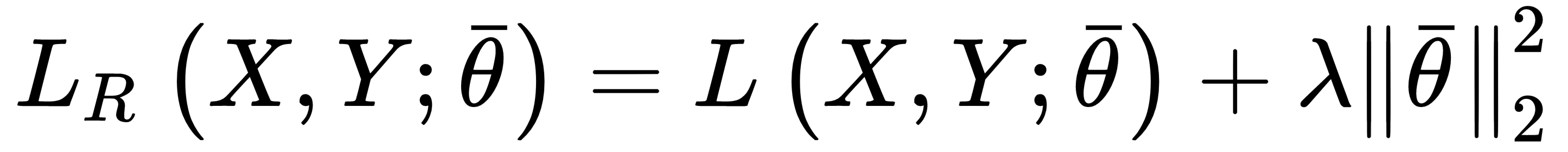

Ridge regularization (also known as Tikhonov regularization) is based on the squared L2-norm of the parameter vector:

This penalty prevents unbounded growth of the parameters (for this reason, it's also known as weight shrinkage), and it's particularly useful when the model is ill-conditioned or there is multicollinearity, that is, when the features are not completely independent of each other (a relatively common condition).
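To make the shrinkage effect concrete, here is a minimal NumPy sketch. It assumes a linear model with a squared-error loss plus the penalty `alpha * ||w||^2`, whose closed-form solution is `w = (XᵀX + alpha·I)⁻¹ Xᵀy`. The synthetic dataset, the helper name `fit_linear`, and the value `alpha=1.0` are illustrative assumptions, not taken from the text.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data with two almost identical features (strong multicollinearity).
n_samples = 200
x1 = rng.normal(size=n_samples)
x2 = x1 + 1e-3 * rng.normal(size=n_samples)
X = np.column_stack([x1, x2])
y = 3.0 * x1 + rng.normal(scale=0.5, size=n_samples)


def fit_linear(X, y, alpha=0.0):
    """Solve (X^T X + alpha * I) w = X^T y.

    alpha = 0 gives ordinary least squares; alpha > 0 gives Ridge.
    (Hypothetical helper written for this illustration; no intercept term.)
    """
    n_features = X.shape[1]
    A = X.T @ X + alpha * np.eye(n_features)
    return np.linalg.solve(A, X.T @ y)


alpha = 1.0  # arbitrary penalty strength chosen for the example
w_ols = fit_linear(X, y, alpha=0.0)
w_ridge = fit_linear(X, y, alpha=alpha)

# The penalty adds alpha to every eigenvalue of X^T X, which improves conditioning.
cond_ols = np.linalg.cond(X.T @ X)
cond_ridge = np.linalg.cond(X.T @ X + alpha * np.eye(X.shape[1]))

print("OLS weights:  ", w_ols, " norm:", np.linalg.norm(w_ols))
print("Ridge weights:", w_ridge, " norm:", np.linalg.norm(w_ridge))
print("Condition number without penalty:", cond_ols)
print("Condition number with penalty:   ", cond_ridge)
```

With nearly collinear features, the unpenalized system is badly conditioned and the individual weights are poorly determined, while the penalized solution has a smaller norm and a far better-conditioned system. In practice, `alpha` would normally be chosen by cross-validation rather than fixed by hand.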

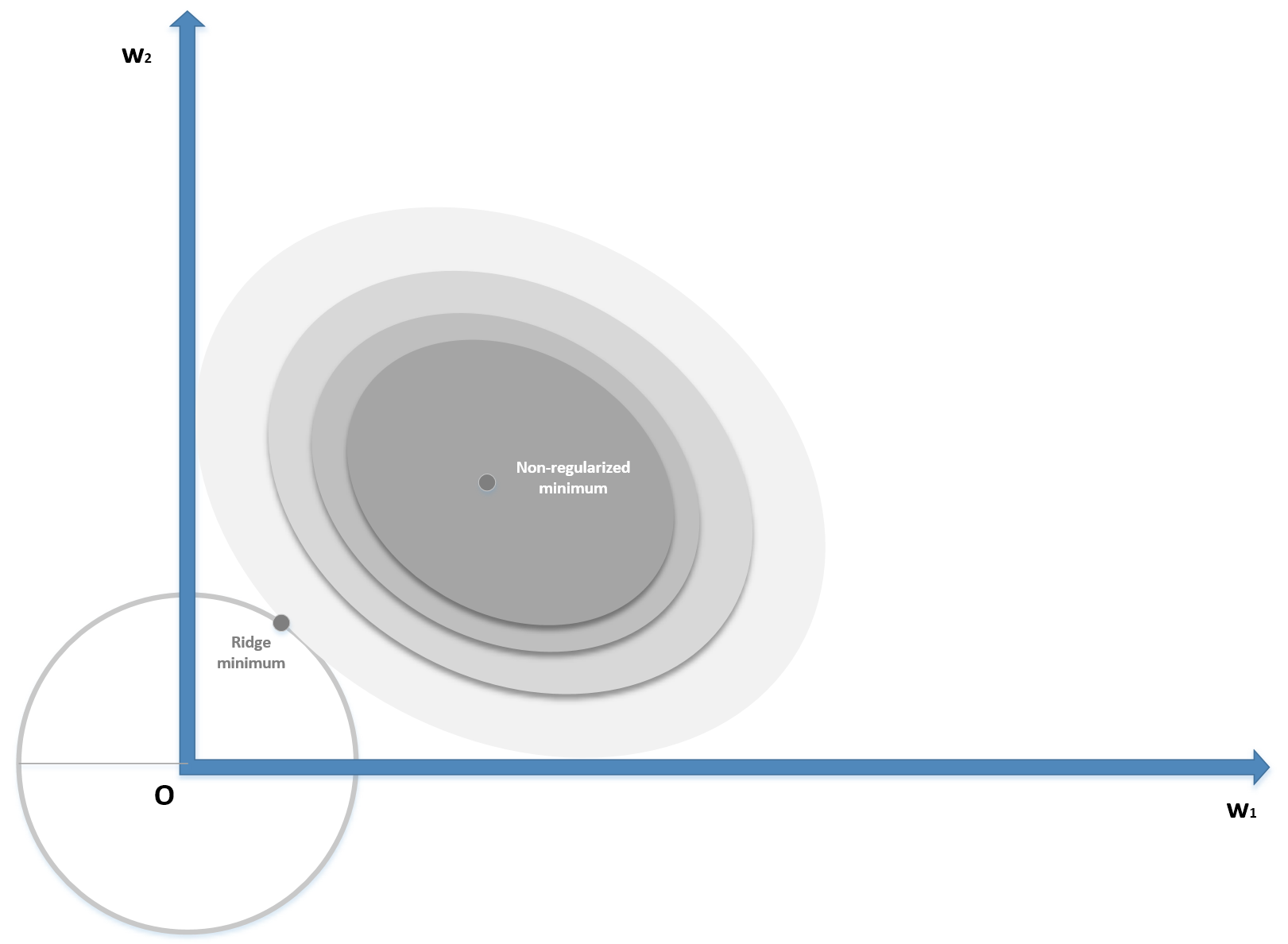

The following diagram shows a schematic representation of Ridge regularization in a two-dimensional scenario: