The master is the first component to start when bootstrapping a distributed Spark cluster. Worker nodes then connect to it to form the cluster. You can start a standalone master server by running the following:

```shell
$SPARK_HOME/sbin/start-master.sh -h 127.0.0.1
```

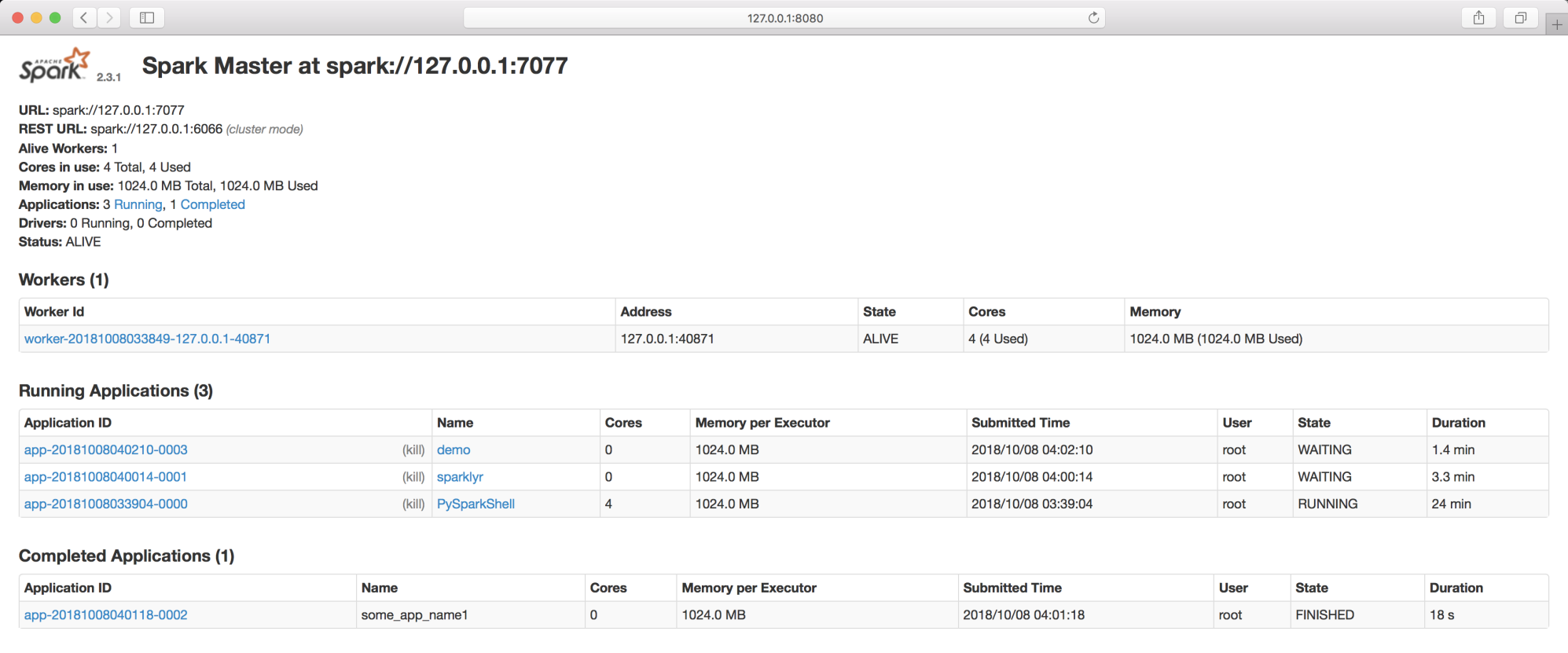

Once it starts, the master reports its URL, spark://127.0.0.1:7077. Worker nodes use this URL to connect to the master, and you can also pass it as the master argument to SparkContext. The same URL is displayed on the master's web UI at http://127.0.0.1:8080.
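The master URL follows the form spark://HOST:PORT. By default the master listens on port 7077 and serves its web UI on port 8080. The sketch below shows how the worker command and web UI address relate to that URL. It is only an illustration. The helper names (`master_url`, `parse_master_url`, `webui_url`, `start_worker_command`) are hypothetical and are not part of Spark. The sketch also assumes Spark 3.1 or later, where the worker script is `sbin/start-worker.sh`. Earlier releases name it `start-slave.sh`.

```python
from __future__ import annotations

from urllib.parse import urlsplit

# Spark standalone defaults: master RPC port 7077, master web UI port 8080.
DEFAULT_MASTER_PORT = 7077
DEFAULT_WEBUI_PORT = 8080


def master_url(host: str, port: int = DEFAULT_MASTER_PORT) -> str:
    """Build the spark://HOST:PORT URL that workers and applications connect to."""
    return f"spark://{host}:{port}"


def parse_master_url(url: str) -> tuple[str, int]:
    """Split a standalone master URL into (host, port), rejecting other schemes."""
    parts = urlsplit(url)
    if parts.scheme != "spark" or not parts.hostname:
        raise ValueError(f"not a standalone master URL: {url!r}")
    # Fall back to the default master port when the URL omits one.
    return parts.hostname, parts.port or DEFAULT_MASTER_PORT


def webui_url(url: str, webui_port: int = DEFAULT_WEBUI_PORT) -> str:
    """Derive the master's web UI address from its spark:// URL."""
    host, _ = parse_master_url(url)
    return f"http://{host}:{webui_port}"


def start_worker_command(url: str, spark_home: str = "$SPARK_HOME") -> list[str]:
    """Return (but do not run) the command that registers a worker with the master.

    Assumes Spark 3.1+, where the script is sbin/start-worker.sh.
    """
    parse_master_url(url)  # validate the URL before building the command
    return [f"{spark_home}/sbin/start-worker.sh", url]


if __name__ == "__main__":
    url = master_url("127.0.0.1")
    print(url)                                # spark://127.0.0.1:7077
    print(webui_url(url))                     # http://127.0.0.1:8080
    print(" ".join(start_worker_command(url)))
```

In an application, the same URL string is what you would pass to `SparkSession.builder.master(...)` in PySpark or to `spark-submit --master`.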

As seen in the previous screenshot, the applications demo, sparklyr, and PySparkShell are actively using the Spark cluster. They are displayed under the ...