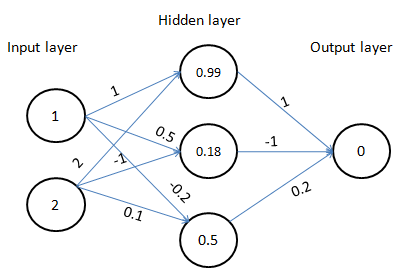

In the previous section, we saw the intuition behind how weights are updated in backpropagation. In this section, we look at the details of how the weight update process works.

In the backpropagation process, we start with the weights at the end of the neural network and work backwards.

In the preceding diagram (1), we change the value of each weight connecting the hidden layer to the output layer by a small amount (0.01), one weight at a time, and record the resulting error:

| Original weight | Changed weight | Error | Reduction in error |
|---|---|---|---|
| 1 | 1.01 | 0.84261 | -1.811 |
| -1 | -0.99 | 0.849 | -0.32 |
| 0.2 | 0.21 | 0.837 | -0.91 |
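
The perturbation procedure described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the network from the diagram: the hidden-layer activations, the target value, the single linear output unit, and the squared-error loss are all assumptions made for the example, so the numbers it prints will not match the table. Only the three starting weights (1, -1, 0.2) and the step size of 0.01 come from the text. The helper names `squared_error` and `error_changes` are hypothetical.

```python
import numpy as np


def squared_error(hidden, weights, target):
    """Squared error of a linear output unit: (hidden . weights - target)^2."""
    prediction = float(np.dot(hidden, weights))
    return (prediction - target) ** 2


def error_changes(hidden, weights, target, delta=0.01):
    """Bump each weight by `delta` in turn and record how the error changes."""
    base = squared_error(hidden, weights, target)
    rows = []
    for i in range(len(weights)):
        bumped = weights.copy()
        bumped[i] += delta  # change only weight i, leave the others untouched
        new_error = squared_error(hidden, bumped, target)
        rows.append((weights[i], bumped[i], new_error, new_error - base))
    return base, rows


# Assumed values for illustration only: hidden activations and target are made up.
hidden = np.array([0.5, 0.8, 0.3])
# Starting weights from the hidden layer to the output layer, as listed in the text.
weights = np.array([1.0, -1.0, 0.2])
target = 1.0

base, rows = error_changes(hidden, weights, target)
print(f"base error: {base:.5f}")
for original, changed, err, change in rows:
    print(f"{original:5.2f} -> {changed:5.2f}  error={err:.5f}  change={change:+.5f}")
```

Here the last column is computed as the new error minus the base error, so a negative value means that nudging the weight upward lowered the error. Dividing that change by `delta` gives a finite-difference estimate of the gradient of the error with respect to that weight.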