Increasing the depth of a neural network means increasing the number of hidden layers in it.

Typically, more hidden units per hidden layer and/or more hidden layers give the network more capacity, so its predictions tend to be more accurate, provided it is trained long enough and does not overfit.

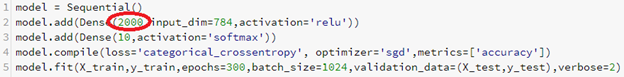
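To get a feel for how width and depth change the size of a network, the following minimal sketch counts the trainable parameters of a fully connected network. The function name `count_dense_parameters` and the layer sizes (784 inputs, 10 outputs, 1,000 or 2,000 hidden units) are assumptions chosen for illustration; they are not taken from the text.

```python
def count_dense_parameters(input_dim, hidden_units, output_dim):
    """Count the weights and biases of a fully connected network.

    hidden_units is a list with one entry per hidden layer,
    giving the number of units in that layer.
    """
    layer_sizes = [input_dim, *hidden_units, output_dim]
    total = 0
    for fan_in, fan_out in zip(layer_sizes, layer_sizes[1:]):
        # One weight per input-output pair, plus one bias per output unit.
        total += fan_in * fan_out + fan_out
    return total


# Assumed sizes, for illustration only.
baseline = count_dense_parameters(784, [1000], 10)      # one hidden layer
wider = count_dense_parameters(784, [2000], 10)         # more hidden units
deeper = count_dense_parameters(784, [1000, 1000], 10)  # more hidden layers

print(baseline, wider, deeper)
```

Under these assumed sizes, both widening and deepening the network raise the parameter count substantially, which is one reason larger networks can fit the data more closely but also take longer to train.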

Given that accuracy saturates after a certain number of epochs with the Adam or RMSprop optimizer, let's switch back to stochastic gradient descent and see what accuracy the model reaches when it is run for 300 epochs, this time with more units in the hidden layer.

Note that by using 2,000 units in the hidden layer, our accuracy increases to ...