Chapter 1. Introduction to Docker

This chapter introduces the basic concepts and terminology of Docker. You’ll also learn about different scheduler frameworks.

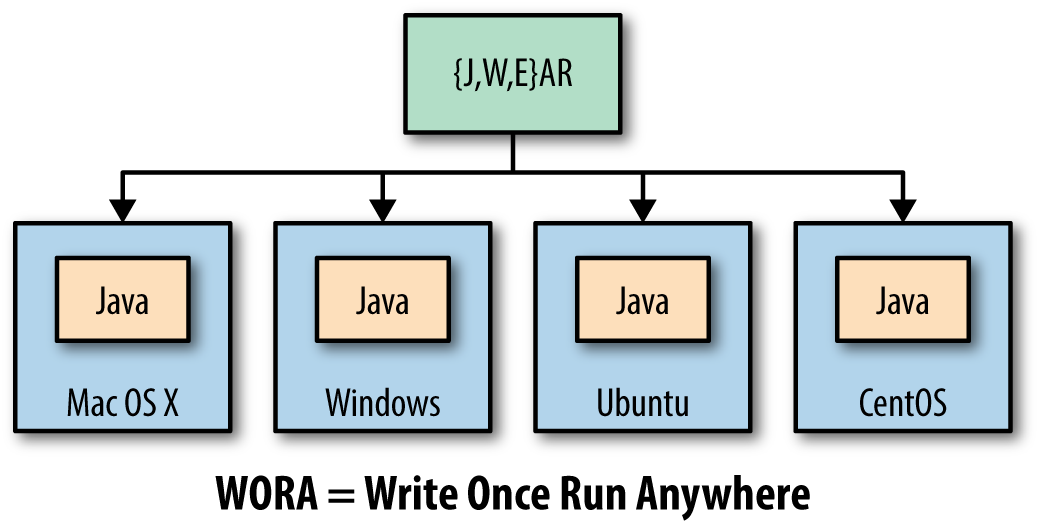

The main benefit of the Java programming language is Write Once Run Anywhere, or WORA, as shown in Figure 1-1. This allows Java source code to be compiled to byte code and run on any operating system where a Java virtual machine is available.

Figure 1-1. Write Once Run Anywhere using Java

Java provides a common API, runtime, and tooling that works across multiple hosts.

Your Java application typically requires infrastructure such as a specific version of an operating system, an application server, a JDK, and a database server. It may need to bind to specific ports and require a certain amount of memory. You may also need to tune configuration files and include multiple other dependencies. The application, its dependencies, and its infrastructure together may be referred to as the application operating system.

Typically, building, deploying, and running an application requires a script that will download, install, and configure these dependencies. Docker simplifies this process by allowing you to create an image that contains your application and infrastructure together, managed as one component. These images are then used to create Docker containers that run on the container virtualization platform, which is provided by Docker.

Docker simplifies software delivery by making it easy to build, ship, and run distributed applications. It provides a common runtime API, image format, and toolset for building, shipping, and running containers on Linux. At the time of writing, there is no native support for Docker on Windows and OS X.

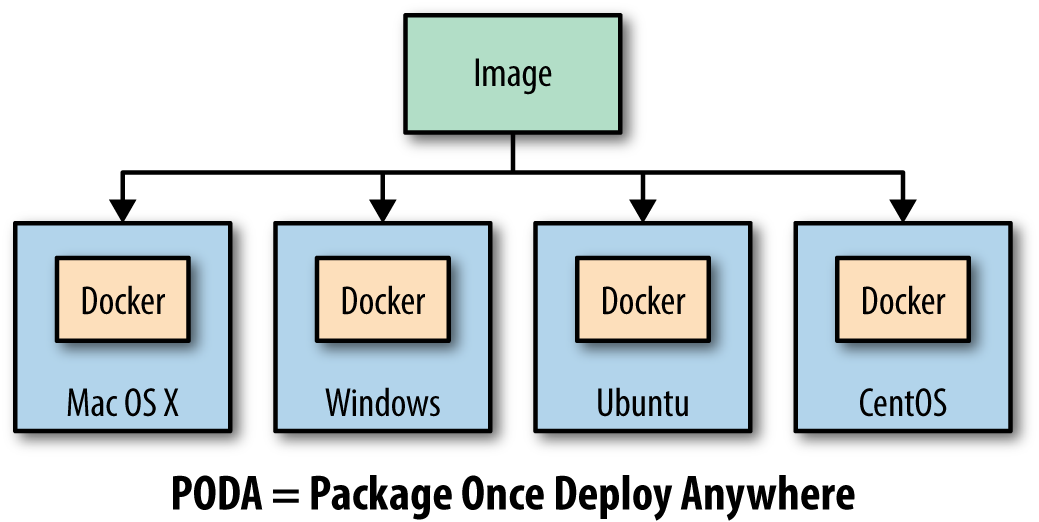

Similar to WORA in Java, Docker provides Package Once Deploy Anywhere, or PODA, as shown in Figure 1-2. This allows a Docker image to be created once and deployed on a variety of operating systems where Docker virtualization is available.

Figure 1-2. Package Once Deploy Anywhere using Docker

Note

PODA is not the same as WORA. A container created using Unix cannot run on Windows and vice versa as the base operating system specified in the Docker image relies on the underlying kernel. However, you can always run a Linux virtual machine (VM) on Windows or a Windows VM on Linux and run your containers that way.

Docker Concepts

Docker simplifies software delivery of distributed applications in three ways:

- Build

  Provides tools you can use to create containerized applications. Developers package the application, its dependencies, and its infrastructure as read-only templates. These templates are called Docker images.

- Ship

  Allows you to share these applications in a secure and collaborative manner. Docker images are stored, shared, and managed in a Docker registry.

  Docker Hub is a publicly available registry. This is the default registry for all images.

- Run

  Provides the ability to deploy, manage, and scale these applications. A Docker container is a runtime representation of an image. Containers can be run, started, scaled, stopped, moved, and deleted.

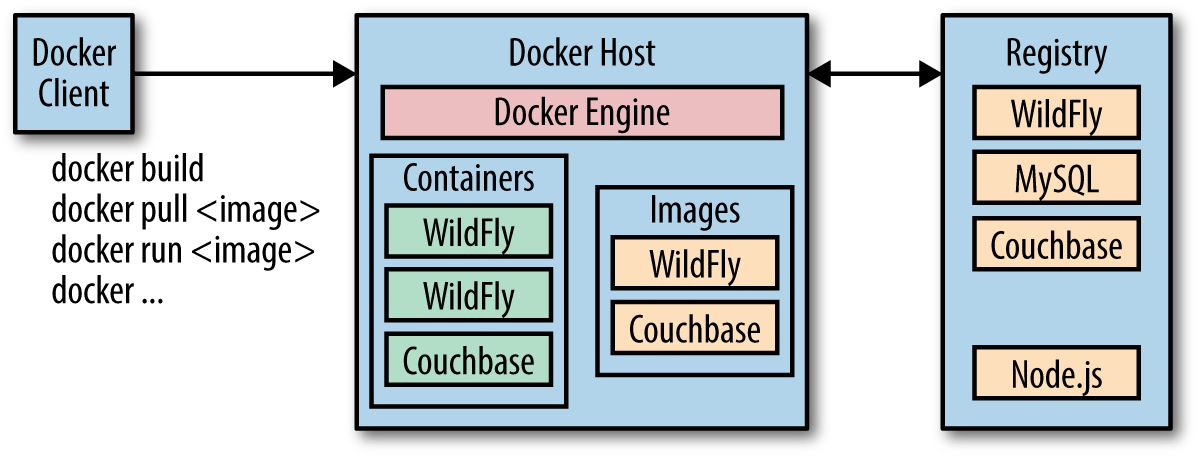

A typical developer workflow involves running Docker Engine on a host machine as shown in Figure 1-3. It does the heavy lifting of building images, and runs, distributes, and scales Docker containers. The client is a Docker binary that accepts commands from the user and communicates back and forth with the Docker Engine.

Figure 1-3. Docker architecture

These steps are now explained in detail:

- Docker host

  A machine, either physical or virtual, is identified to run the Docker Engine.

- Configure Docker client

  The Docker client binary is downloaded on a machine and configured to talk to this Docker Engine. For development purposes, the client and Docker Engine typically are located on the same machine. The Docker Engine could be on a different host in the network as well.

- Client downloads or builds an image

  The client can pull a prebuilt image from the preconfigured registry using the pull command, create a new image using the build command, or run a container using the run command.

- Docker host downloads the image from the registry

  The Docker Engine checks to see if the image already exists on the host. If not, then it downloads the image from the registry. Multiple images can be downloaded from the registry and installed on the host. Each image would represent a different software component. For example, WildFly and Couchbase are downloaded in this case.

- Client runs the container

  The new container can be created using the run command, which runs the container using the image definition. Multiple containers, either of the same image or different images, run on the Docker host.
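The "check locally, download if missing, then run" behavior described in these steps can be sketched in a few lines of Python. This is a toy model for illustration only, not the Docker Engine API: the `ToyEngine` class, its methods, and the registry contents are all hypothetical names.

```python
# Toy model of the pull-if-missing step; this is not the Docker Engine API.
REGISTRY = {"jboss/wildfly:latest", "couchbase:latest"}  # hypothetical registry contents


class ToyEngine:
    def __init__(self, registry):
        self.registry = registry
        self.local_images = set()   # images already present on the host
        self.containers = []        # (container_id, image) pairs
        self.pull_count = 0         # how many downloads were needed

    def pull(self, image):
        if image not in self.registry:
            raise LookupError(f"image not found in registry: {image}")
        self.local_images.add(image)
        self.pull_count += 1

    def run(self, image):
        # Download only when the image is not already on the host.
        if image not in self.local_images:
            self.pull(image)
        container_id = len(self.containers) + 1
        self.containers.append((container_id, image))
        return container_id


engine = ToyEngine(REGISTRY)
first = engine.run("jboss/wildfly:latest")   # image is missing, so it is pulled
second = engine.run("jboss/wildfly:latest")  # image is reused from the host
```

After both calls the model holds two containers started from a single downloaded image, which mirrors the point that multiple containers of the same image can run on one host.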

Docker Images and Containers

Docker images are read-only templates from which Docker containers are launched. Each image consists of a series of layers. Docker makes use of a union filesystem to combine these layers into a single image. Union filesystems allow files and directories of separate filesystems, known as branches, to be transparently overlaid, forming a single coherent filesystem.

One of the reasons Docker is so lightweight is these layers. When you change a Docker image, for example to update an application to a new version, a new layer gets built. Thus, rather than replacing the whole image or entirely rebuilding it, as you might with a VM, only that layer is added or updated. You don’t need to distribute a whole new image, just the update, which makes distributing Docker images faster and simpler.
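To make the layering idea concrete, here is a minimal Python sketch that models each layer as a dictionary of paths and overlays them with `collections.ChainMap`. It is only an analogy for how a union filesystem resolves a path: the file names and contents are made up, and details such as file deletion are left out.

```python
from collections import ChainMap

# Each layer maps a path to file contents; all names and contents are made up.
base_layer = {"/etc/os-release": "linux", "/usr/bin/java": "jdk-8"}
app_layer_v1 = {"/opt/app/app.war": "version 1"}
app_layer_v2 = {"/opt/app/app.war": "version 2"}


def union_view(*layers):
    """Overlay layers, listed bottom to top, into one merged view."""
    # ChainMap searches its first mapping first, so the top layer goes first.
    return ChainMap(*reversed(layers))


image_v1 = union_view(base_layer, app_layer_v1)
image_v2 = union_view(base_layer, app_layer_v2)
```

Both views share the same `base_layer` object, so moving from `image_v1` to `image_v2` only requires the new application layer. That is the property that keeps image updates small.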

Docker images are built on Docker Engine, distributed using the registry, and run as containers.

Multiple versions of an image may be stored in the registry using the format image-name:tag. image-name is the name of the image and tag is a version assigned to the image by the user. By default, the tag value is latest and typically refers to the latest release of the image. For example, jboss/wildfly:latest is the image name for the latest release of the WildFly application server. A previous version of the WildFly Docker container can be started with the image jboss/wildfly:9.0.0.Final.
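The image-name:tag convention, including the latest default, can be illustrated with a small Python helper. The function name `split_image_reference` is hypothetical, and the sketch deliberately ignores other reference forms such as digests.

```python
def split_image_reference(reference, default_tag="latest"):
    """Split 'image-name:tag' into (image_name, tag), defaulting the tag."""
    # Look for the tag separator only after the last '/', so that a
    # registry host with a port (for example 'localhost:5000/app', a
    # hypothetical name) is not mistaken for a tag.
    last_slash = reference.rfind("/")
    colon = reference.rfind(":")
    if colon > last_slash:
        return reference[:colon], reference[colon + 1:]
    return reference, default_tag
```

For example, `split_image_reference("jboss/wildfly")` yields the latest tag, while `split_image_reference("jboss/wildfly:9.0.0.Final")` keeps the explicit one.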

Once the image is downloaded from the registry, multiple instances of the container can be started easily using the run command.

Docker Toolbox

Docker Toolbox is the fastest way to get up and running with Docker in development. It provides different tools required to get started with Docker.

The complete set of installation instructions is available from the Docker website.

Here is a list of the tools included in the Docker Toolbox:

- Docker Engine, or the docker binary
- Docker Machine, or the docker-machine binary
- Docker Compose, or the docker-compose binary
- Kitematic, the desktop GUI for Docker
- A preconfigured shell for invoking Docker commands
- Oracle VirtualBox
- Boot2Docker ISO

Docker Engine, Docker Machine, and Docker Compose are explained in detail in the following sections. Kitematic is a simple application for managing Docker containers on Mac, Linux, and Windows. Oracle VirtualBox is a free and open source hypervisor for x86 systems. Docker Machine uses it to create a VirtualBox VM and to create, use, and manage a Docker host inside that VM. A default Docker Machine is created as part of the Docker Toolbox installation. The preconfigured shell is just a terminal whose environment is configured for the default Docker Machine. Boot2Docker ISO is a lightweight Linux distribution based on Tiny Core Linux. It is used by VirtualBox to provision the VM.

Get a more native experience with the preview version of Docker for Mac or Windows.

Let’s look at some tools from Docker Toolbox in detail now.

Docker Engine

Docker Engine is the central piece of Docker. It is a lightweight runtime that builds and runs your Docker containers. The runtime consists of a daemon that communicates with the Docker client and executes commands to build, ship, and run containers.

Docker Engine uses Linux kernel features like cgroups, kernel namespaces, and a union-capable filesystem. These features allow the containers to share a kernel and run in isolation with their own process ID space, filesystem structure, and network interfaces.

Docker Engine is supported on Linux, Windows, and OS X.

On Linux, it can typically be installed using the native package manager. For example, yum install docker-engine will install Docker Engine on CentOS.

On Windows and Mac, it is installed using Docker Machine. This is explained in the section “Docker Machine”. Alternatively, Docker for Mac or Windows, available in beta at the time of this writing, provides a native experience on these platforms.

Docker Machine

Docker Machine allows you to create Docker hosts on your computer, on cloud providers, and inside your own data center. It creates servers, installs Docker on them, and then configures the Docker client to talk to them. The docker-machine CLI comes with Docker Toolbox and allows you to create and manage machines.

Once your Docker host has been created, docker-machine provides a number of commands for managing it:

- Start, stop, or restart the host
- Upgrade Docker
- Configure the Docker client to talk to a host

Commonly used commands for Docker Machine are listed in Table 1-1.

| Command | Purpose |
|---|---|
| create | Create a machine |
| ls | List machines |
| env | Display the commands to set up the environment for the Docker client |
| stop | Stop a machine |
| rm | Remove a machine |
| ip | Get the IP address of the machine |

The complete set of commands for the docker-machine binary can be found using the command docker-machine --help.

Docker Machine uses a driver to provision the Docker host on a local network or on a cloud. By default, at the time of writing, Docker Machine created on a local machine uses boot2docker as the operating system. Docker Machine created on a remote cloud provider uses "Ubuntu LTS" as the operating system.

Installing Docker Toolbox creates a Docker Machine called default.

Docker Machine can be easily created on a local machine as shown here:

docker-machine create -d virtualbox my-machine

The machine created using this command uses the VirtualBox driver and my-machine as the machine’s name.

The Docker client can be configured to give commands to the Docker host running on this machine as shown in Example 1-1.

Example 1-1. Configure Docker client for Docker Machine

eval $(docker-machine env my-machine)

Any commands from the docker CLI will now run on this Docker Machine.

Docker Compose

Docker Compose is a tool that allows you to define and run applications with one or more Docker containers. Typically, an application would consist of multiple containers such as one for the web server, another for the application server, and another one for the database. With Compose, a multi-container application can be easily defined in a single file. All the containers required for the application can be then started and managed with a single command.

With Docker Compose, there is no need to write scripts or use any additional tools to start your containers. All the containers are defined as services in a configuration file. The docker-compose script is then used to start, stop, restart, and scale the application, all the services in that application, and all the containers within each service.

Commonly used commands for Docker Compose are listed in Table 1-2.

| Command | Purpose |
|---|---|
| up | Create and start containers |
| restart | Restart services |
| build | Build or rebuild services |
| scale | Set number of containers for a service |
| stop | Stop services |
| kill | Kill containers |
| logs | View output from containers |
| ps | List containers |

The complete set of commands for the docker-compose binary can be found using the command docker-compose --help.

The Docker Compose file is typically called docker-compose.yml. If you decide to use a different filename, it can be specified using the -f option to the docker-compose script.

All the services in the Docker Compose file can be started as shown here:

docker-compose up -d

This starts the containers for all the services in the background, that is, in detached mode.

Docker Swarm

An application typically consists of multiple containers. Running all containers on a single Docker host makes that host a single point of failure (SPOF). This is undesirable in any system because if that host fails, the entire system stops working and your application is no longer accessible.

Docker Swarm allows you to run a multi-container application on multiple hosts. It allows you to create and access a pool of Docker hosts using the full suite of Docker tools. Because Docker Swarm serves the standard Docker API, any tool that already communicates with a Docker daemon can use Swarm to transparently scale to multiple hosts. This means an application that consists of multiple containers can now be seamlessly deployed to multiple hosts.

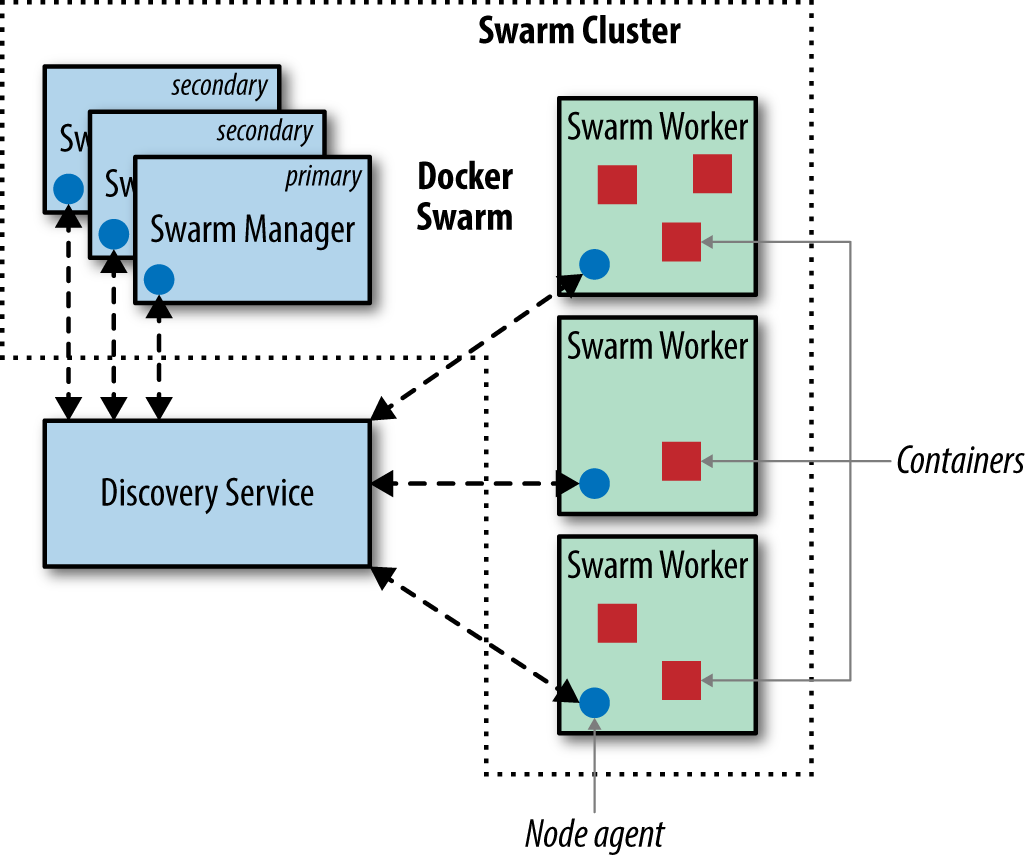

Figure 1-4 shows the main concepts of Docker Swarm.

Figure 1-4. Docker Swarm Architecture

Let’s learn about the key components of Docker Swarm and how they avoid SPOF:

- Swarm manager

  Docker Swarm has a manager that is a predefined Docker host in the cluster and manages the resources in the cluster. It orchestrates and schedules containers in the entire cluster.

  The Swarm manager can be configured with a primary instance and multiple secondary instances for high availability.

- Discovery service

  The Swarm manager talks to a hosted discovery service. This service maintains a list of IPs in the Swarm cluster. Docker Hub hosts a discovery service that can be used during development. In production, this is replaced by other services such as etcd, consul, or zookeeper. You can even use a static file. This is particularly useful if there is no Internet access or you are running in a closed network.

- Swarm worker

  The containers are deployed on nodes that are additional Docker hosts. Each node must be accessible by the manager. Each node runs a Docker Swarm agent that registers the referenced Docker daemon, monitors it, and updates the discovery service with the node’s status.

- Scheduler strategy

  Different scheduler strategies (spread (default), binpack, and random) can be applied to pick the best node to run your container. The default strategy favors the node with the least number of running containers. There are multiple kinds of filters, such as constraints and affinity. A combination of different filters allows you to create your own scheduling algorithm.

- Standard Docker API

  Docker Swarm serves the standard Docker API and thus any tool that talks to a single Docker host will seamlessly scale to multiple hosts. This means that a multi-container application can now be easily deployed on multiple hosts configured as a Docker Swarm cluster.
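The three scheduler strategies can be contrasted with a short Python sketch. It is a simplification made for illustration, not Swarm's implementation: the `pick_node` function and the cluster data are hypothetical, nodes are ranked only by container count, and filters are not modeled. A real scheduler would typically also weigh resources such as CPU and memory.

```python
import random


def pick_node(nodes, strategy="spread", rng=random):
    """Pick a node name from a {node_name: container_count} mapping."""
    if not nodes:
        raise ValueError("no nodes available")
    if strategy == "spread":
        # Fewest containers wins; ties go to the alphabetically first name.
        return min(sorted(nodes), key=lambda name: nodes[name])
    if strategy == "binpack":
        # Most containers wins, packing work onto as few nodes as possible.
        return max(sorted(nodes), key=lambda name: nodes[name])
    if strategy == "random":
        return rng.choice(sorted(nodes))
    raise ValueError(f"unknown strategy: {strategy}")


cluster = {"node-1": 3, "node-2": 1, "node-3": 2}  # hypothetical cluster
```

With this data, spread selects the least loaded node and binpack the most loaded one, which shows why spread limits the impact of losing a single host while binpack keeps other hosts free for larger workloads.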

Docker Machine and Docker Compose are integrated with Docker Swarm. Docker Machine can participate in the Docker Swarm cluster using --swarm, --swarm-master, --swarm-strategy, --swarm-host, and other similar options. This allows you to easily create a Docker Swarm sandbox on your local machine using VirtualBox.

An application created using Docker Compose can be targeted to a Docker Swarm cluster. This allows multiple containers in the application to be distributed across multiple hosts, thus avoiding SPOF.

Multiple containers talk to each other using an overlay network. This type of network is created by Docker and supports multihost networking natively out-of-the-box. It allows containers to talk across hosts.

If the containers are targeted to a single host then a bridge network is created, which only allows the containers on that host to talk to each other.

Kubernetes

Kubernetes is an open source orchestration system for managing containerized applications, which can be deployed across multiple hosts. Kubernetes provides basic mechanisms for deployment, maintenance, and scaling of applications. An application’s desired state, such as “3 instances of WildFly” or “2 instances of Couchbase,” can be specified declaratively, and Kubernetes ensures that the state is maintained.
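The idea of maintaining a declared state can be sketched as a comparison between what is desired and what is observed. The Python below is a conceptual illustration only, not Kubernetes code: the `reconcile` function and the observed counts are hypothetical.

```python
def reconcile(desired, running):
    """Return the actions needed to move `running` toward `desired`.

    Both arguments map an application name to an instance count.
    """
    actions = []
    for app in sorted(set(desired) | set(running)):
        diff = desired.get(app, 0) - running.get(app, 0)
        if diff > 0:
            actions.append(("start", app, diff))
        elif diff < 0:
            actions.append(("stop", app, -diff))
    return actions


desired_state = {"wildfly": 3, "couchbase": 2}
observed_state = {"wildfly": 1, "couchbase": 2}  # hypothetical: two WildFly instances are missing
```

Calling `reconcile(desired_state, observed_state)` reports that two WildFly instances need to be started. When the observed state already matches, it returns no actions.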

Kubernetes is a container-agnostic system and Docker is one of the container formats supported.

The main application concepts in Kubernetes are explained below:

- Pod

  A pod is the smallest deployable unit that can be created, scheduled, and managed. It’s a logical collection of containers that belong to an application. An application would typically consist of multiple pods.

  Each resource in Kubernetes is defined using a configuration file. For example, a Couchbase pod can be defined as shown here:

      apiVersion: v1
      kind: Pod
      # labels attached to this pod
      metadata:
        labels:
          name: couchbase-pod
      spec:
        containers:
        - name: couchbase
          # Docker image that will run in this pod
          image: couchbase
          ports:
          - containerPort: 8091

  Each pod is assigned a unique IP address in the cluster. The image attribute defines the Docker image that will be included in this pod.

- Labels

  A label is a key/value pair that is attached to objects, such as pods. Multiple labels can be attached to a resource. Labels can be used to organize and to select subsets of objects. Labels define identifying attributes of an object and are meaningful and relevant only to the user.

  In the previous example, metadata.labels defines the labels attached to the pod.

- Replication controller

  A replication controller ensures that a specified number of pod replicas are running on worker nodes at all times. It allows both up- and down-scaling of the number of replicas. Pods inside a replication controller are re-created when the worker node reboots or otherwise fails.

  A replication controller that creates two instances of a Couchbase pod can be defined as shown here:

      apiVersion: v1
      kind: ReplicationController
      metadata:
        name: couchbase-controller
      spec:
        # Two replicas of the pod to be created
        replicas: 2
        # Identifies the label key and value on the Pod
        # that this replication controller is responsible
        # for managing
        selector:
          app: couchbase-rc-pod
        # "cookie cutter" used for creating new pods when
        # necessary
        template:
          metadata:
            labels:
              # label key and value on the pod.
              # These must match the selector above.
              app: couchbase-rc-pod
          spec:
            containers:
            - name: couchbase
              image: couchbase
              ports:
              - containerPort: 8091

- Service

  Each pod is assigned a unique IP address. If a pod managed by a replication controller fails, it is re-created but may be given a different IP address. This makes it difficult for an application server such as WildFly to access a database such as Couchbase using its IP address.

  A service defines a logical set of pods and a policy by which to access them. The IP address assigned to a service does not change over time, and thus can be relied upon by other pods. Typically the pods belonging to a service are defined by a label selector.

  For example, a Couchbase service might be defined as shown here:

      apiVersion: v1
      kind: Service
      metadata:
        name: couchbase-service
        labels:
          app: couchbase-service-pod
      spec:
        ports:
        - port: 8091
        # label keys and values of the pod started elsewhere
        selector:
          app: couchbase-rc-pod

  Note that the labels used in selector must match the labels in the metadata of the pods created by the replication controller.
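How a selector picks out pods by their labels can be sketched in Python. This is an illustration of simple equality-based matching under assumed data, not the Kubernetes implementation: the function names and pod names are hypothetical, while the label keys and values are taken from the manifests above.

```python
def matches(selector, labels):
    """True when every key/value pair in the selector appears in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def select_pods(selector, pods):
    """Return the names of pods whose labels satisfy the selector."""
    return [name for name, labels in pods.items() if matches(selector, labels)]


# Pod names are hypothetical; the labels come from the manifests above.
pods = {
    "couchbase-rc-1": {"app": "couchbase-rc-pod"},
    "couchbase-rc-2": {"app": "couchbase-rc-pod"},
    "standalone": {"name": "couchbase-pod"},
}
service_selector = {"app": "couchbase-rc-pod"}
```

Here the service selector matches the two pods created from the replication controller template but not the standalone pod, whose only label uses a different key. This is why the selector and the template labels have to agree.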

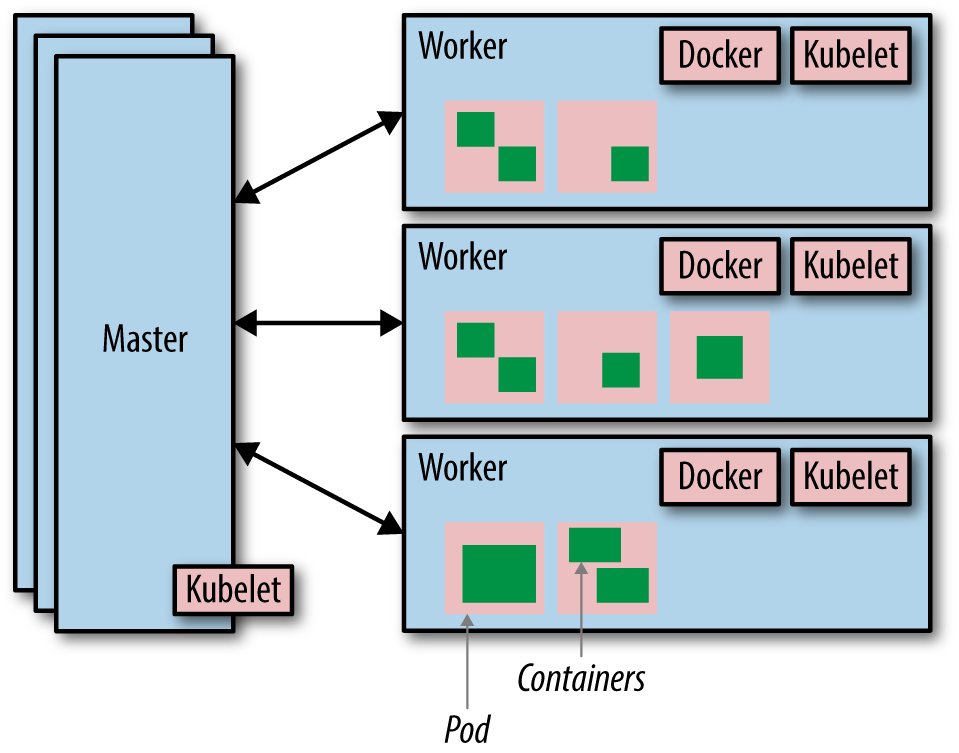

So far, we have learned the application concepts of Kubernetes. Let’s look at some of the system concepts of Kubernetes, as shown in Figure 1-5.

Figure 1-5. Kubernetes architecture

Let’s break down the pieces of Kubernetes architecture:

- Cluster

  A Kubernetes cluster is a set of physical or virtual machines and other infrastructure resources that are used to run your applications. The machines that manage the cluster are called masters and the machines that run the containers are called workers.

- Node

  A node is a physical or virtual machine. It has the necessary services to run application containers.

  A master node is the central control point that provides a unified view of the cluster. Multiple masters can be set up to create a high-availability cluster.

  A worker node runs tasks as delegated by the master; it can run one or more pods.

- Kubelet

  This is a service running on each node that runs containers and allows the node to be managed from the master. This service reads container manifests as YAML or JSON files that describe the application. A typical way to provide this manifest is using the configuration file.

A Kubernetes cluster can be started easily on a local machine for development purposes. It can also be started on hosted solutions, turn-key cloud solutions, or custom solutions.

Kubernetes can be easily started on Google Cloud using the following command:

curl -sS https://get.k8s.io | bash

The same command can be used to start Kubernetes on Amazon Web Services, Azure, and other cloud providers; the only difference is that the environment variable KUBERNETES_PROVIDER needs to be set to the name of the provider, for example aws for Amazon Web Services.

The Kubernetes Getting Started Guides provide more details on setup.

Other Platforms

Docker Swarm allows multiple containers to run on multiple hosts. Kubernetes provides an alternative way to run multi-container applications on multiple hosts. This section lists some other platforms that allow you to run multiple containers on multiple hosts.

Apache Mesos

Apache Mesos provides high-level building blocks by abstracting CPU, memory, storage, and other resources from machines (physical or virtual). Multiple applications, called frameworks in Mesos, can run on it and use these blocks to provide resource isolation and sharing across distributed applications.

Marathon is one such framework that provides container orchestration. Docker containers can be easily managed in Marathon. Kubernetes can also be started as a framework on Mesos.

Amazon EC2 Container Service

Amazon EC2 Container Service (ECS) is a highly scalable and high-performance container management service that supports Docker containers. It allows you to easily run applications on a managed cluster of Amazon EC2 instances.

Amazon ECS lets you launch and stop container-enabled applications with simple API calls, allows you to get the state of your cluster from a centralized service, and gives you access to many familiar Amazon EC2 features such as security groups, elastic load balancing, and EBS volumes.

Docker containers run on AMIs hosted on EC2. This eliminates the need to operate your own cluster management systems or worry about scaling your management infrastructure.

More details about ECS are available from Amazon’s ECS site.

Rancher Labs

Rancher Labs develops software that makes it easy to deploy and manage containers in production. They have two main offerings—Rancher and RancherOS.

Rancher is a container management platform that natively supports and manages your Docker Swarm and Kubernetes clusters. Rancher takes a Linux host, either a physical machine or virtual machine, and makes its CPU, memory, local disk storage, and network connectivity available on the platform. Users can now choose between Kubernetes and Swarm when they deploy environments. Rancher automatically stands up the cluster, enforces access control policies, and provides a complete UI for managing the cluster.

RancherOS is a barebones operating system built for running containers. Everything else is pulled dynamically through Docker.

Joyent Triton

Triton by Joyent virtualizes the entire data center as a single, elastic Docker host. The Triton data center is built using Solaris Zones but offers an endpoint that serves the Docker remote API. This allows Docker CLI and other tools that can talk to this API to run containers easily.

Triton can be used as a service from Joyent or installed on-premises with software from Joyent. You can also download the open source version and run it yourself.

Red Hat OpenShift

OpenShift is Red Hat’s open source PaaS. OpenShift 3 uses Docker and Kubernetes for container orchestration. It provides a holistic and simple experience of provisioning, building, and deploying your applications in a self-service fashion.

It provides automated workflows, such as source-to-image (S2I), that take source code from version control systems and convert it into ready-to-run, Docker-formatted images. It also integrates with continuous integration and delivery tools, which makes it a good fit for development teams.
