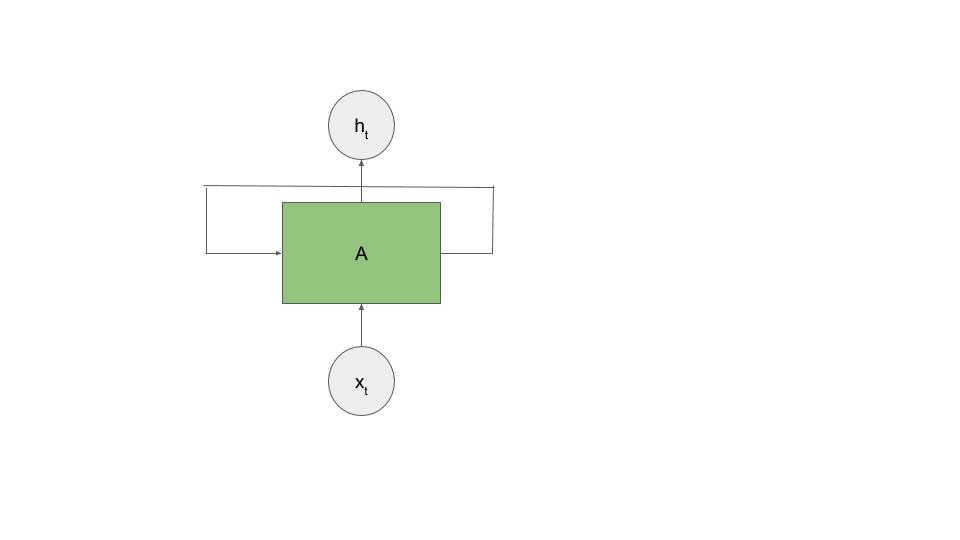

Recurrent neural networks have loops, which allow information to persist from one prediction to the next. This means that the output of each neuron depends on both the current input and the previous outputs of the network, as shown in the following image:

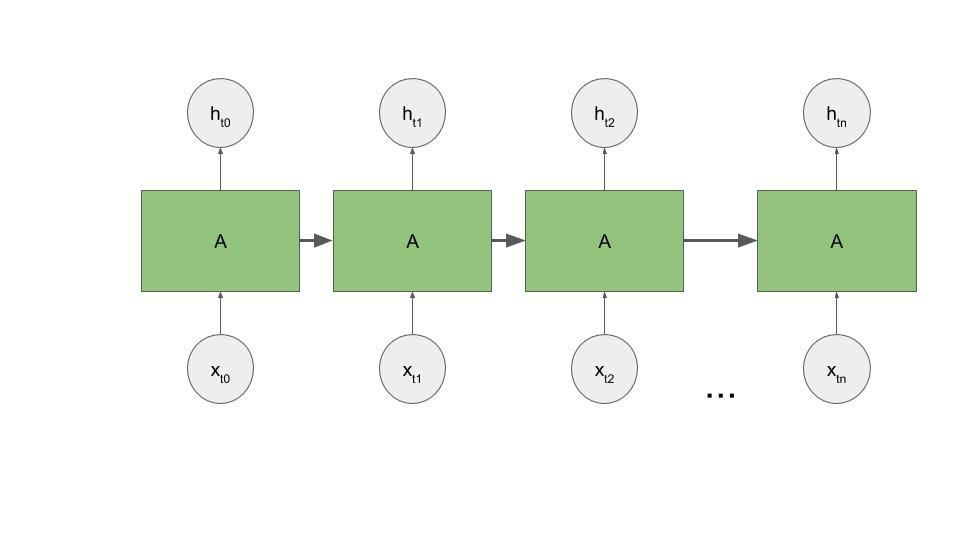
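To make that dependence concrete, here is a minimal sketch of a single recurrent step for one neuron. The function name `rnn_step`, the scalar weights `w_x` and `w_h`, the bias `b`, and the choice of `tanh` as the activation are all assumptions made for illustration; they are not taken from the text or from any particular library.

```python
import math

def rnn_step(x, h_prev, w_x=0.5, w_h=0.8, b=0.0):
    """One recurrent step for a single neuron (hypothetical weights).

    The new output mixes the current input x with the previous output h_prev.
    """
    return math.tanh(w_x * x + w_h * h_prev + b)

# Start with no memory, then feed in an input of 1.0.
h0 = 0.0
h1 = rnn_step(1.0, h0)

# Feed the same input (0.0) twice: once with the remembered output, once without.
h2_with_memory = rnn_step(0.0, h1)
h2_no_memory = rnn_step(0.0, 0.0)

print(h1, h2_with_memory, h2_no_memory)
```

Even though the second input is the same in both cases, the two results differ, because one call still carries information from the earlier step.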

If we were to unroll this diagram across time, it would look more like the following graph. This idea of the network feeding its own output back into itself is where the term *recurrent* comes from, although as a CS major I always think of it as a recursive neural network.
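The same idea can be sketched in code: a loop that reuses one step function produces exactly the same outputs as writing each time step out by hand. The names `rnn_step`, `run_looped`, and `run_unrolled_3`, the scalar weights, and the `tanh` activation are hypothetical choices for this sketch, not something defined in the text.

```python
import math

def rnn_step(x, h_prev, w_x=0.5, w_h=0.8):
    """One recurrent step with hypothetical scalar weights."""
    return math.tanh(w_x * x + w_h * h_prev)

def run_looped(inputs, h0=0.0):
    """The compact view: one cell whose output loops back into itself."""
    h = h0
    outputs = []
    for x in inputs:
        h = rnn_step(x, h)  # the previous output feeds the next step
        outputs.append(h)
    return outputs

def run_unrolled_3(x1, x2, x3, h0=0.0):
    """The unrolled view: three copies of the same cell, chained across time."""
    h1 = rnn_step(x1, h0)
    h2 = rnn_step(x2, h1)
    h3 = rnn_step(x3, h2)
    return [h1, h2, h3]

looped = run_looped([1.0, 0.5, -1.0])
unrolled = run_unrolled_3(1.0, 0.5, -1.0)
print(looped == unrolled)
```

Both functions share the same weights at every time step, which is the sense in which the unrolled graph is still a single network.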

In ...