Chapter 2. Agile Tools

This chapter briefly introduces our software stack, which is optimized for doing agile data science.

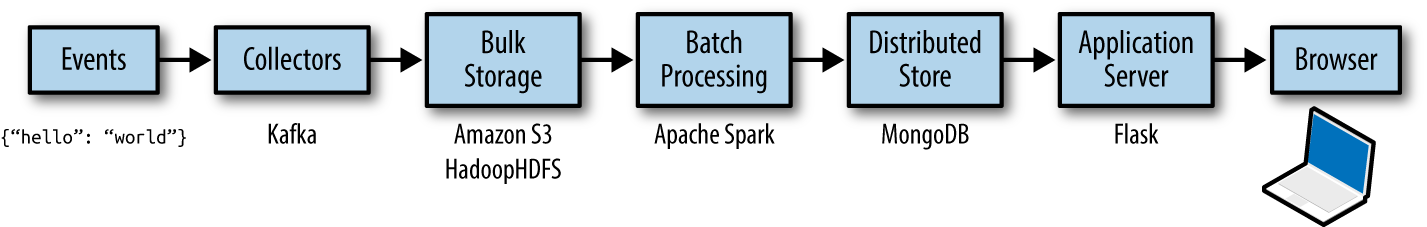

By the end of this chapter, you’ll be collecting, storing, processing, publishing, and decorating data (Figure 2-1). Our stack enables one person to do all of this, to go “full stack.”

Figure 2-1. The software stack process

Full-stack skills are among the most in-demand skills for data scientists. We’ll cover a lot here, and quickly, but don’t worry: I will continue to demonstrate this software stack in Chapters 5 through 9. For now, you need only understand the basics; you will get more comfortable later.

We’ll begin with instructions for running the stack in local mode on your own machine. In the next chapter, you’ll learn how to scale this same stack in the cloud via Amazon Web Services. Let’s get started!

Code examples for this chapter are available at Agile_Data_Code_2/ch02. Clone the repository and follow along!

git clone https://github.com/rjurney/Agile_Data_Code_2.git

Scalability = Simplicity

As data science, big data, and NoSQL tools like Spark, Hadoop, and MongoDB have developed, much focus has been placed on the plumbing of analytics applications. However, this is not a book about infrastructure. This book teaches you to build applications that use such infrastructure. Once our stack has been introduced, we will take this plumbing for granted ...
